You're scrolling through social media when a video of a world leader declaring war flashes across your screen. Your heart races. You share it. Except... it never happened. Welcome to the age of deepfakes, where seeing is no longer believing.

We are living in a technological wonderland where AI can conjure hyper-realistic videos, audio clips, and images from thin air.

One moment, this technology is bringing historical figures back to life for documentaries or helping filmmakers create movie magic on a shoestring budget. The next, it's a weapon: spreading lies at the speed of light, stealing identities like a digital pickpocket, or cracking open security systems that were supposed to be foolproof.

How do we catch a fake that's designed to be perfect? What technologies are fighting on the frontlines? And why has this become one of the most critical challenges of our digital age?

Let’s find out.

Key Takeaways

- Deepfakes use AI to create realistic fake media, making it harder to distinguish truth from manipulation.

- Deepfake detection relies on AI and machine learning to spot inconsistencies in visuals, audio, and metadata.

- Resemble AI’s DETECT-2B model delivers advanced, real-time, and highly accurate deepfake detection across formats.

- Detection tools like Intel FakeCatcher and Deepware Scanner help expose manipulated content, while open-source projects like DeepFaceLab and FaceForensics++ support the research behind them.

- Staying vigilant is key: verify sources, use detection tools, and promote transparency to protect digital authenticity.

What Are Deepfakes?

Deepfakes are media files, such as videos, audio recordings, or images, that have been altered or completely generated by AI to appear as if they are real. Using technologies like Generative Adversarial Networks (GANs), deepfake creators can replace a person’s face in a video or manipulate audio to make it sound like someone else is speaking.

The ability to create hyper-realistic deepfakes has rapidly improved, making it difficult for experts to distinguish them from authentic content. Deepfakes are commonly used in:

- Entertainment: To create special effects or digital avatars of celebrities.

- Misinformation: To spread fake news and manipulate public opinion.

- Fraud and Identity Theft: To impersonate individuals for malicious purposes.

Types of Deepfakes

- Face-Swapped Videos: Overlay a subject’s face onto someone else’s body in motion.

- Lip-Syncing & Audio Overlays: Replace mouth movements to match synthetic or manipulated audio.

- Voice-Only Cloning: Replicate a real person's voice with AI, without any visuals; often used in phone scams.

- Full-Body Reenactment: Capture an actor’s entire posture, movement, and gestures and map them onto a different individual.

In a TED Talk, politician Cara Hunter shared a chilling experience: a deepfake video nearly derailed her career. A fabricated clip falsely depicting her spread like wildfire, sparking outrage and confusion. It was a stark reminder of how deepfakes can be weaponized to manipulate public perception and damage reputations, all with frightening realism.

As deepfake technology becomes more sophisticated, the need for reliable detection methods has never been more critical. But how does deepfake detection work? Let’s see.

How Deepfake Detection Works: A Detailed Look

Deepfake detection works by looking for discrepancies in media files that are typically absent from authentic content. While deepfakes are designed to look realistic, they often contain subtle inconsistencies that can be identified using various detection methods. Let’s break down the key technologies used for deepfake detection:

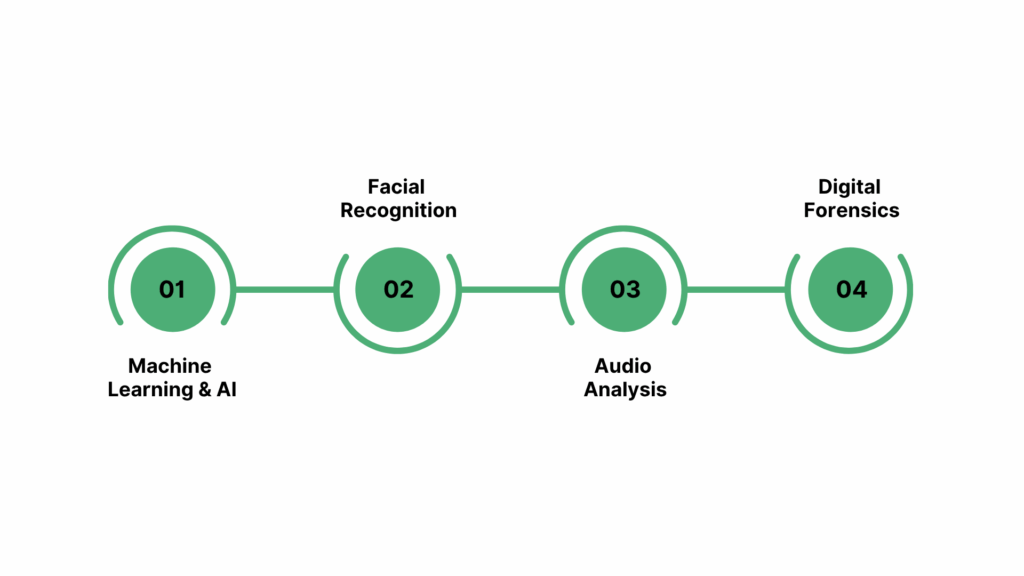

Machine Learning & AI

The backbone of deepfake detection is machine learning, where AI models are trained on large datasets to recognize patterns in media files. These models are taught to identify even the smallest inconsistencies between real and fabricated content, such as unnatural blinking patterns, inconsistent lighting, or irregularities in voice synthesis.

Detection tools like Resemble AI’s DETECT-2B use AI algorithms to compare suspect media against known features of real human behavior and authentic recordings. As they are retrained on new examples, these algorithms become better at spotting subtle manipulations that may go unnoticed by human viewers.
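To make the training idea concrete, here is a minimal sketch of a classifier that learns to separate real from fake clips. It is not how DETECT-2B or any production detector works: the two features (a normalized blink rate and a lighting-mismatch score), the synthetic data, and every function name are assumptions made up for illustration.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(samples, labels, epochs=300, lr=0.5):
    """Fit a logistic regression with plain batch gradient descent."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(samples, labels):
            err = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def fake_probability(model, features):
    weights, bias = model
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)

def make_synthetic_dataset(seed=0, per_class=40):
    """Hypothetical features: [normalized blink rate, lighting mismatch]."""
    rng = random.Random(seed)
    samples, labels = [], []
    for _ in range(per_class):
        samples.append([rng.gauss(0.8, 0.1), rng.gauss(0.2, 0.1)])  # real clip
        labels.append(0)
        samples.append([rng.gauss(0.2, 0.1), rng.gauss(0.7, 0.1)])  # fake clip
        labels.append(1)
    return samples, labels

samples, labels = make_synthetic_dataset()
model = train_logistic(samples, labels)
print("few blinks, odd lighting:", round(fake_probability(model, [0.15, 0.75]), 3))
print("normal blinks, clean lighting:", round(fake_probability(model, [0.85, 0.15]), 3))
```

Real detectors learn from raw pixels and waveforms with far larger models, but the loop is the same in spirit: show the model labeled examples, then nudge its parameters until real and fake land on opposite sides of a boundary.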

Facial Recognition

Facial recognition plays a vital role in detecting deepfakes, especially in videos. Deepfake generators often struggle to replicate the fine details of facial expressions and micro-movements that are present in real footage. Detection tools analyze these features for unnatural patterns, such as improper lip-syncing, inconsistent eye movements, or abnormal lighting.
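One of the eye-movement cues above can be sketched in a few lines. The example assumes an upstream face tracker has already produced an "eye aspect ratio" (EAR) per frame, a number that drops when the eye closes. The threshold of 0.2 and the plausible range of 8 to 30 blinks per minute are illustrative assumptions, not clinical or vendor values.

```python
def count_blinks(ear_series, closed_threshold=0.2):
    """Count transitions from open to closed eyes in a per-frame EAR series."""
    blinks = 0
    eye_closed = False
    for ear in ear_series:
        if ear < closed_threshold and not eye_closed:
            blinks += 1
            eye_closed = True
        elif ear >= closed_threshold:
            eye_closed = False
    return blinks

def blink_rate_check(ear_series, fps, min_per_minute=8, max_per_minute=30):
    """Return (is_plausible, blinks_per_minute) for the clip."""
    minutes = len(ear_series) / fps / 60
    rate = count_blinks(ear_series) / minutes
    return min_per_minute <= rate <= max_per_minute, rate

# One minute at 30 fps: a 3-frame blink every 4 seconds versus no blinks at all.
natural = [0.1 if i % 120 < 3 else 0.3 for i in range(1800)]
staring = [0.3] * 1800
print(blink_rate_check(natural, fps=30))
print(blink_rate_check(staring, fps=30))
```

A failed check is only a hint. Modern generators often blink convincingly, so a signal like this is best combined with others rather than trusted alone.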

Audio Analysis

For deepfakes that involve voice manipulation, audio analysis is crucial. AI can alter a person’s voice, but the manipulated speech often lacks the natural rhythm, tone, and intonations of real human speech.

Audio analysis tools compare voice characteristics, such as cadence, pitch, and phoneme accuracy, to detect discrepancies. For instance, Resemble AI Audio Intelligence is an AI-powered solution for comprehensive, multi-faceted audio analysis.
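As a rough illustration of the pitch comparison described above, the sketch below estimates pitch frame by frame with a basic autocorrelation search and measures how much it varies. Unnaturally flat pitch is one possible warning sign. The pure tones stand in for speech, and the 80 to 400 Hz search range and all function names are assumptions for the example, not the method of any named product.

```python
import math
import statistics

def estimate_pitch_hz(frame, sample_rate, min_hz=80, max_hz=400):
    """Pick the lag with the strongest autocorrelation inside the speech pitch range."""
    min_lag = sample_rate // max_hz
    max_lag = sample_rate // min_hz
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

def pitch_variability(frames, sample_rate):
    """Coefficient of variation of per-frame pitch (0 means perfectly monotone)."""
    pitches = [estimate_pitch_hz(f, sample_rate) for f in frames]
    return statistics.pstdev(pitches) / statistics.mean(pitches)

def tone(freq_hz, sample_rate=8000, length=400):
    """A 50 ms pure tone used as a stand-in for one frame of voiced speech."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate) for n in range(length)]

varied = [tone(f) for f in (100, 125, 160, 200, 125, 160)]
monotone = [tone(125) for _ in range(6)]
print("varied:", round(pitch_variability(varied, 8000), 3))
print("monotone:", round(pitch_variability(monotone, 8000), 3))
```

Production systems use far more robust pitch trackers and many more features, but the principle carries over: measure a property of natural speech and ask whether the clip behaves the way a human voice would.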

Digital Forensics

Digital forensics focuses on analyzing the metadata, compression artifacts, and other file characteristics of a media file. Deepfake videos and images often contain hidden clues in their metadata that can reveal if the content has been altered. Compression methods used by video and image manipulation software may also introduce artifacts that are typically absent in authentic media.
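A metadata check can be as simple as the sketch below. It assumes the metadata has already been extracted into a dictionary by some other tool. The key names (`created`, `modified`, `software`, `camera_model`) and the short list of software hints are hypothetical choices for the example.

```python
from datetime import datetime

# Illustrative only: a real list would be longer and maintained over time.
EDITING_SOFTWARE_HINTS = ("photoshop", "after effects", "deepfacelab")

def metadata_red_flags(metadata):
    """Return human-readable warnings for a dict of already-extracted metadata."""
    flags = []
    created = metadata.get("created")
    modified = metadata.get("modified")
    if created and modified and modified < created:
        flags.append("modified timestamp precedes creation timestamp")
    software = (metadata.get("software") or "").lower()
    if any(hint in software for hint in EDITING_SOFTWARE_HINTS):
        flags.append("processed with editing software: " + metadata["software"])
    if not metadata.get("camera_model"):
        flags.append("no camera model recorded")
    return flags

suspect = {
    "created": datetime(2024, 5, 2, 9, 0),
    "modified": datetime(2024, 5, 1, 18, 30),
    "software": "Adobe Photoshop",
}
for flag in metadata_red_flags(suspect):
    print("-", flag)
```

None of these flags proves manipulation. Plenty of genuine files pass through editing software or lose their camera tags when uploaded, so flags like these are leads for a closer look rather than verdicts.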

Did you know that deepfakes can show up anywhere, even in the middle of a live meeting?

As the deepfake-technology landscape continues to evolve, our detection tools must evolve as well. Let’s explore the latest innovations in this field.

Essential Tools & Technologies for Deepfake Detection

The rapid growth of deepfake technology has spurred the development of advanced detection tools designed to identify manipulated media. These tools leverage a combination of machine learning, facial recognition, and digital forensics to analyze and detect deepfakes.

Resemble AI: Leading the Charge in Deepfake Detection

Resemble AI offers a suite of cutting-edge tools and technologies that are specifically designed to detect, analyze, and prevent deepfake threats across a range of media formats. It offers one of the most sophisticated multimodal detection platforms, capable of analyzing deepfake content across audio, video, and images in real time.

Its flagship DETECT-2B model leverages Mamba-SSM (State Space Models) and self-supervised learning, enabling it to capture long-range temporal dependencies and identify subtle anomalies in synthetic media. This architecture supports cross-modal consistency checks, such as matching lip movement against the voiceprint, to keep detection accurate even in challenging environments.

In benchmark tests, DETECT-2B has achieved 94–98% precision across 30+ languages and multiple media formats, outperforming traditional CNN and RNN-based models, which often struggle with adversarial perturbations.
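It helps to be clear about what a precision figure does and does not say. Precision is the share of flagged items that really were fake; it says nothing about how many fakes slipped through, which is what recall measures. The counts below are invented purely to show the arithmetic and are not DETECT-2B results.

```python
def precision(true_positives, false_positives):
    """Of everything flagged as fake, what fraction was actually fake?"""
    flagged = true_positives + false_positives
    return true_positives / flagged if flagged else 0.0

def recall(true_positives, false_negatives):
    """Of everything that was actually fake, what fraction was flagged?"""
    actual = true_positives + false_negatives
    return true_positives / actual if actual else 0.0

# Hypothetical run: 100 clips flagged, 94 of them truly fake, 20 fakes missed.
print("precision:", precision(94, 6))
print("recall:", round(recall(94, 20), 3))
```

In this made-up run precision is 0.94 while recall is only about 0.82, which is why it is worth asking for both numbers when comparing detectors.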

Key Capabilities:

- Ultra-Low Latency Detection: With the ability to process both video frames and audio streams in under 300 ms, Resemble AI is ideal for live conferencing, broadcast monitoring, and fraud prevention in real-time communications.

- PerTH Watermarking: Embeds perceptually hidden watermarks into AI-generated speech and video, ensuring provenance and providing tamper-resistant verification without compromising media quality.

- Cross-Modal Verification: Matches voiceprints, facial biometrics, and metadata to detect discrepancies, a vital feature for spotting high-quality deepfakes where individual signals may seem authentic.

- Audio Intelligence: Analyzes audio for anomalies, synthetic voice patterns, and other subtle manipulations to identify deepfake or spoofed audio content.

- Deepfake Detection for Meetings: Monitors live video and audio streams within video conferencing platforms, preventing AI-generated impersonations and securing real-time communication during critical interactions.

- Security Awareness Training: Provides tools and simulations to educate employees on how to identify deepfakes and understand associated risks, thereby enhancing organizational cyber resilience.

- API-First Architecture: Offers REST and WebSocket APIs for seamless integration into IVR systems, content moderation pipelines, video conferencing platforms, and forensic tools.

Best For: Enterprises, media outlets, and digital platforms requiring real-time, multimodal deepfake detection, traceability, and compliance readiness to defend against synthetic voice and video fraud, reputational attacks, and misinformation campaigns.
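The cross-modal idea can be illustrated with a toy lip-sync check: when someone really speaks, how wide the mouth opens tends to rise and fall with the loudness of the audio. The sketch below correlates the two series. It is a simplified stand-in rather than Resemble AI's implementation, and the per-frame inputs, the 0.5 threshold, and the function names are assumptions.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)

def lip_sync_is_consistent(mouth_opening, audio_energy, min_correlation=0.5):
    """True when mouth movement and audio loudness move together."""
    return pearson(mouth_opening, audio_energy) >= min_correlation

# Synthetic per-frame measurements: mouth opening, matching audio, mismatched audio.
mouth = [abs(math.sin(0.3 * i)) for i in range(100)]
audio_matching = [0.8 * m + 0.1 for m in mouth]
audio_mismatched = [abs(math.sin(0.3 * i + 1.5)) for i in range(100)]

print(lip_sync_is_consistent(mouth, audio_matching))
print(lip_sync_is_consistent(mouth, audio_mismatched))
```

The appeal of cross-modal checks is that a forger has to get two signals right at once: a flawless face paired with a flawless voice can still fail if the two do not line up in time.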

Open-Source Deepfake Detection Tools

Now, let’s look at open-source projects that are widely used in deepfake detection research.

DeepFaceLab

An open-source platform best known for creating face-swap deepfakes rather than detecting them. Its relevance to detection is indirect: researchers can use it to generate realistic manipulated videos and to study the facial features and movements that generators struggle to reproduce, which helps train and stress-test detection models.

It’s widely used in academic research and by cybersecurity professionals for analyzing potential threats in media.

FaceForensics++

This project provides a dataset and various tools for detecting facial manipulations in videos. Researchers use FaceForensics++ to train models on deepfake detection by analyzing facial movements, reflections, and other subtle cues that are difficult for deepfake generators to replicate accurately.

It’s an essential resource for academic institutions and security researchers working on advanced detection technologies.

Real-Time Detection Platforms

Here are two more deepfake detection platforms that catch deepfakes in real time.

Intel FakeCatcher

Intel has developed a real-time deepfake detection platform called FakeCatcher. Unlike traditional methods that rely on detecting visual inconsistencies, FakeCatcher analyzes subtle changes in the blood flow beneath the skin, a feature that deepfake generation typically misses.

This cutting-edge approach makes FakeCatcher highly effective at detecting even the most sophisticated deepfakes.
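The blood-flow idea is known in research as remote photoplethysmography: skin color shifts very slightly with each heartbeat, so the average green value of a face region pulses over time. The sketch below is not Intel's implementation. It looks for a dominant frequency in the typical heart-rate band of a synthetic signal, and the band limits, the signal itself, and the function name are assumptions.

```python
import math
import random

def estimate_pulse(green_means, fps, low_hz=0.7, high_hz=3.0, step_hz=0.05):
    """Return (beats_per_minute, peak_to_mean_power) within the heart-rate band."""
    mean = sum(green_means) / len(green_means)
    centered = [g - mean for g in green_means]
    steps = int(round((high_hz - low_hz) / step_hz))
    powers = []
    for k in range(steps + 1):
        freq = low_hz + k * step_hz
        re = sum(c * math.cos(2 * math.pi * freq * i / fps) for i, c in enumerate(centered))
        im = sum(c * math.sin(2 * math.pi * freq * i / fps) for i, c in enumerate(centered))
        powers.append((re * re + im * im, freq))
    peak_power, peak_freq = max(powers)
    mean_power = sum(p for p, _ in powers) / len(powers)
    if mean_power == 0:
        return 0.0, 0.0
    return peak_freq * 60, peak_power / mean_power

# Ten seconds of synthetic face-region brightness at 30 fps with a 72 bpm pulse.
rng = random.Random(1)
face_signal = [
    120 + 0.5 * math.sin(2 * math.pi * 1.2 * i / 30) + rng.gauss(0, 0.2)
    for i in range(300)
]
bpm, strength = estimate_pulse(face_signal, fps=30)
print("estimated pulse:", round(bpm), "bpm; peak strength:", round(strength, 1))
```

A clear, stable peak at a plausible heart rate supports the footage being of a living person, while a weak or erratic peak is a reason to look closer. Real systems also have to cope with head motion, lighting changes, and video compression.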

Deepware Scanner

This tool scans videos and images in real time, looking for typical deepfake markers such as mismatched lighting, odd eye movement, or imperfect speech synchronization. It is specifically designed to cater to security firms and organizations that require fast, accurate deepfake detection on a large scale.

While the tools and technologies for deepfake detection are becoming more advanced, the fight against deepfakes is ongoing. Now, let’s dive into some of the key challenges faced in deepfake detection.

The Major Challenges in Deepfake Detection

Despite the significant advancements in deepfake detection, several challenges remain in identifying manipulated media. Let’s explore some of the main challenges in detecting deepfakes:

Evolving Technology

Deepfake creation technology is evolving rapidly, and the AI algorithms behind it are becoming more accurate. This means that deepfakes are increasingly difficult to spot, as creators continuously refine their methods to produce more convincing fakes. To stay ahead, deepfake detection tools must also evolve.

High-Quality Fakes

As deepfake technology advances, some deepfakes have reached a level of quality that makes them nearly indistinguishable from authentic media. This includes high-definition videos, realistic facial expressions, and lifelike voice synthesis.

These high-quality fakes present a significant challenge for detection, as the visual and auditory discrepancies that once helped identify manipulated content are becoming harder to spot.

For example, some deepfake creators are now able to mimic voice patterns with incredible precision, making it difficult to detect audio manipulations. Similarly, the visual imperfections in the eyes, skin tones, and lighting that used to be telltale signs of a deepfake are becoming more refined.

Resource-Intensive Process

Another significant challenge in deepfake detection is the computational resources required to accurately identify manipulated media. Advanced deepfake detection methods, especially those based on machine learning and AI, can be resource-intensive, requiring significant computational power. For instance, analyzing high-resolution videos and running deep learning algorithms on large datasets demands powerful servers and high-end GPUs.

Moreover, the expertise needed to develop and implement these detection methods adds another layer of complexity. Organizations must not only invest in powerful hardware but also in specialized personnel who can understand and optimize detection algorithms. For many small businesses and organizations, this makes deepfake detection solutions expensive and inaccessible.


Best Practices for Safeguarding Against Deepfakes

As the threat of deepfakes grows, it is essential to take proactive steps to protect yourself, your organization, and your audience. Below are some best practices that can help minimize the risk and prevent the spread of misleading or harmful content:

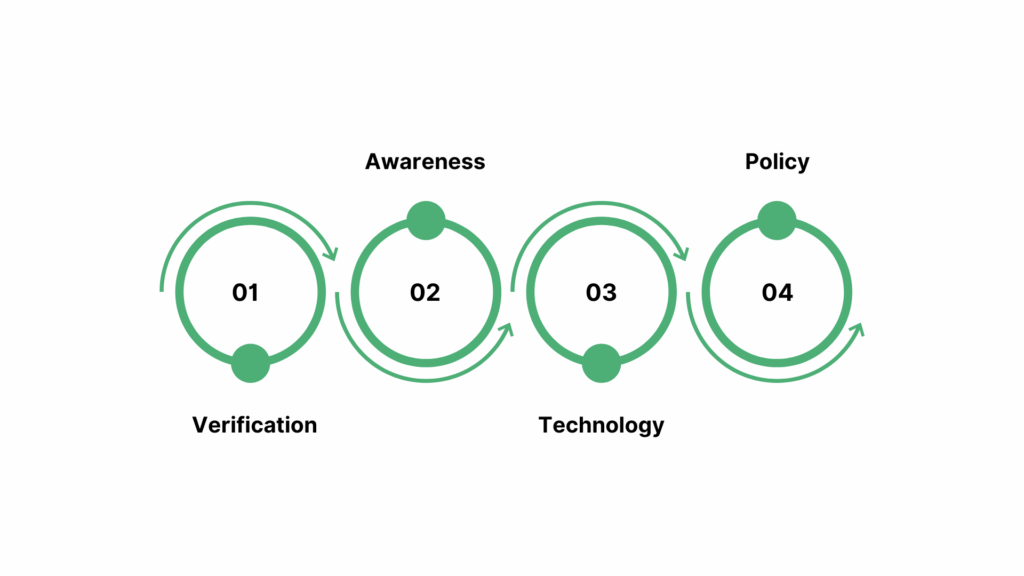

1. Verification: Cross-Check Information from Multiple Sources

The first line of defense against deepfakes is always verification. In today’s digital world, it's crucial to cross-check the authenticity of any media content before trusting or sharing it. This is especially important for videos or images that seem out of context or sensational.

Utilize tools like reverse image searches (e.g., Google Reverse Image Search) and fact-checking platforms (e.g., Snopes, PolitiFact) to confirm the source and credibility of the content. Always verify news or media from multiple reputable sources before sharing it, as deepfakes are often designed to mislead or manipulate viewers.
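Commercial reverse image search engines do not publish their internals, but one common building block for finding near-duplicate images is a perceptual hash. The sketch below shows a basic "average hash" on an 8x8 grayscale grid. It assumes the image has already been shrunk and converted to grayscale by another tool, and it is an illustration of the general technique rather than what any particular search engine does.

```python
def average_hash(pixels):
    """One bit per pixel: 1 if brighter than the image mean, else 0."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]

def hamming_distance(hash_a, hash_b):
    """Number of differing bits; small distances suggest the same picture."""
    return sum(a != b for a, b in zip(hash_a, hash_b))

# An 8x8 gradient, a slightly brightened copy, and an unrelated checkerboard.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brightened = [[value + 3 for value in row] for row in original]
unrelated = [[255 if (r + c) % 2 else 0 for c in range(8)] for r in range(8)]

print(hamming_distance(average_hash(original), average_hash(brightened)))
print(hamming_distance(average_hash(original), average_hash(unrelated)))
```

Because the hash survives small changes in brightness or compression, it can help trace a suspicious image back to an earlier original, which is often the fastest way to show that a picture has been reused or altered.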

2. Awareness: Educate Yourself and Others on the Risks

One of the most effective ways to safeguard against deepfakes is awareness. Educating both individuals and teams about the existence of deepfakes and their potential dangers is crucial for preventing the spread of fake content.

This means not only understanding how deepfakes work but also recognizing the risks they pose, whether in terms of misinformation, identity theft, or reputation damage. Workshops, seminars, and company-wide training on deepfake identification can significantly reduce the impact.

3. Technology: Leverage Detection Tools to Ensure Authenticity

Incorporating deepfake detection tools into your workflow can dramatically reduce the chances of encountering manipulated content. Technologies like machine learning, AI-based detection software, and real-time analysis tools can help identify deepfakes before they cause damage.
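In practice, fitting a detector into a workflow usually means deciding what happens at different confidence levels. Here is a minimal sketch of that triage step, assuming the detector returns a score between 0 and 1, where higher means more likely fake. The thresholds and action names are placeholders to tune for your own risk tolerance.

```python
def triage(fake_score, review_threshold=0.5, block_threshold=0.9):
    """Map a detector score in [0, 1] to a workflow action."""
    if not 0.0 <= fake_score <= 1.0:
        raise ValueError("fake_score must be between 0 and 1")
    if fake_score >= block_threshold:
        return "block"
    if fake_score >= review_threshold:
        return "human_review"
    return "publish"

for score in (0.12, 0.64, 0.97):
    print(score, "->", triage(score))
```

Keeping a human review band in the middle is a deliberate choice: detectors make mistakes in both directions, and borderline content is where a second look pays off most.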

4. Policy: Advocate for Transparency

As deepfakes become more prevalent, it is important to advocate for regulations that ensure transparency in digital media. Governments and tech companies must work together to implement policies requiring the labeling of AI-generated content. By making it mandatory to clearly label any content that has been altered or created with AI (such as deepfakes), we can prevent its malicious use and ensure accountability.

Additionally, industry-wide standards for deepfake detection and responsible AI usage are vital in establishing trust with audiences. Supporting policies that mandate labeling or watermarking manipulated media can protect consumers from falling victim to deepfakes.

Resemble AI plays a key role in advocating for transparency by offering PerTH Watermarking, which embeds tamper-resistant digital watermarks in audio and video content. This ensures the provenance of media and supports the push for clear labeling of AI-generated content to enhance accountability and consumer protection.
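The details of PerTH are not described here, so the sketch below should not be read as its algorithm. It is a toy version of a classic idea, spread-spectrum watermarking: add a faint pseudo-random pattern derived from a secret key, then later check whether the audio still correlates with that pattern. The key values, the strength, and the function names are all assumptions for illustration.

```python
import math
import random

def watermark_sequence(key, length):
    """Pseudo-random +1/-1 pattern that only the key holder can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]

def embed(samples, key, strength=0.05):
    """Add a faint keyed pattern to the audio samples."""
    marks = watermark_sequence(key, len(samples))
    return [s + strength * m for s, m in zip(samples, marks)]

def detect(samples, key, strength=0.05):
    """True when the samples correlate with the keyed pattern."""
    marks = watermark_sequence(key, len(samples))
    correlation = sum(s * m for s, m in zip(samples, marks)) / len(samples)
    return correlation > strength / 2

# A 440 Hz tone stands in for generated speech.
audio = [0.5 * math.sin(2 * math.pi * 440 * n / 16000) for n in range(20000)]
marked = embed(audio, key=1234)

print("marked, right key:", detect(marked, key=1234))
print("marked, wrong key:", detect(marked, key=9999))
print("unmarked:", detect(audio, key=1234))
```

A production watermark has to stay inaudible and survive compression, re-recording, and editing, which this toy does not attempt. What it does show is why watermarking supports provenance: whoever holds the key can later check whether a clip came from their generator.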

Real-World Applications of Deepfake Detection

Deepfake detection is critical across a variety of industries, each facing unique challenges in preventing the misuse of AI-generated content. Here's how deepfake detection is applied in some of the most impacted sectors:

Journalism: Ensuring Content Authenticity

In the world of journalism, the stakes are high when it comes to ensuring the authenticity of news reports. With deepfakes becoming increasingly sophisticated, they pose a serious threat to the credibility of media organizations.

Deepfake detection tools help journalists quickly verify the authenticity of video and audio content, preventing the spread of fake news that could damage public trust.

Security: Safeguarding Against Identity Theft and Fraud

Deepfakes are also a significant concern in the security sector, where they are increasingly being used in identity theft and fraud. For example, criminals can use deepfake technology to create realistic fake videos or audio recordings that impersonate a person’s voice or appearance. This can lead to financial fraud, blackmail, and other types of scams.

Detection tools in security systems help quickly identify manipulated content, ensuring that video footage and audio recordings used as evidence are genuine.

Entertainment: Maintaining Content Integrity

In movies, TV shows, and digital media, deepfakes can be used to alter performances, create fake celebrity endorsements, or even manipulate plotlines. While creative uses of deepfake technology exist, such as in special effects, there is a growing concern about unauthorized alterations of original content.

Deepfake detection tools in entertainment help ensure that what viewers are watching is the original, unaltered work. They also assist in verifying the authenticity of viral videos or celebrity content, protecting the integrity of films, TV shows, and media from manipulation.

Legal: Verifying Evidence in Courtrooms

In the legal sector, deepfake detection is crucial for verifying the authenticity of evidence. Manipulated videos or audio recordings could be used to falsify witness statements, frame individuals, or mislead juries. The ability to detect deepfakes ensures that courts only rely on genuine media during legal proceedings.

Legal professionals can use detection tools to confirm the legitimacy of videos, audio recordings, and digital images used as evidence in trials. This technology ensures that manipulated content cannot be used to distort justice, protecting individuals and upholding the integrity of the legal system.

Conclusion

As deepfake technology continues to advance, it poses increasing risks across various sectors, from misinformation in journalism to identity theft in security. However, deepfake detection tools have become increasingly sophisticated, providing powerful solutions to identify and mitigate the impact of AI-generated media.

With advancements in AI, machine learning, and facial recognition, we can catch many of even the most convincing deepfakes and better protect individuals, organizations, and society from the harms of manipulated content.

Resemble AI helps you in this mission by providing cutting-edge detection tools that ensure the authenticity of your digital media, helping you stay ahead of deepfake threats.

Caught in a deepfake? Trust Resemble AI to safeguard your voice. Book a demo to explore our detection tools now and stay protected.

FAQs

1. What is a deepfake?

A deepfake is media (image, video, or audio) that has been altered or generated using AI, often to deceive viewers or manipulate information.

2. How can I detect a deepfake?

Detection involves using AI-driven tools to analyze facial features, voice patterns, and metadata inconsistencies in media files.

3. What are the risks associated with deepfakes?

Deepfakes pose significant risks such as misinformation, identity theft, fraud, and harm to reputations.

4. Can deepfakes be used for legitimate purposes?

Yes. Deepfakes have legitimate uses in entertainment and education, but they can also be misused for malicious purposes.