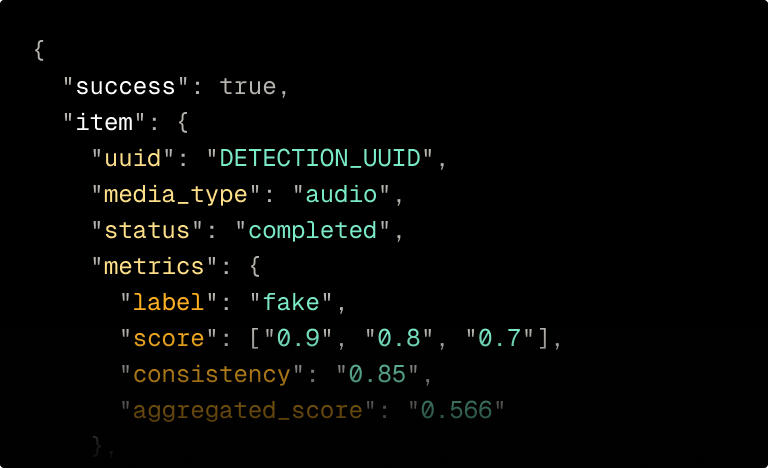

Resemble Intelligence is the explainability layer built to work with DETECT-3B Omni.

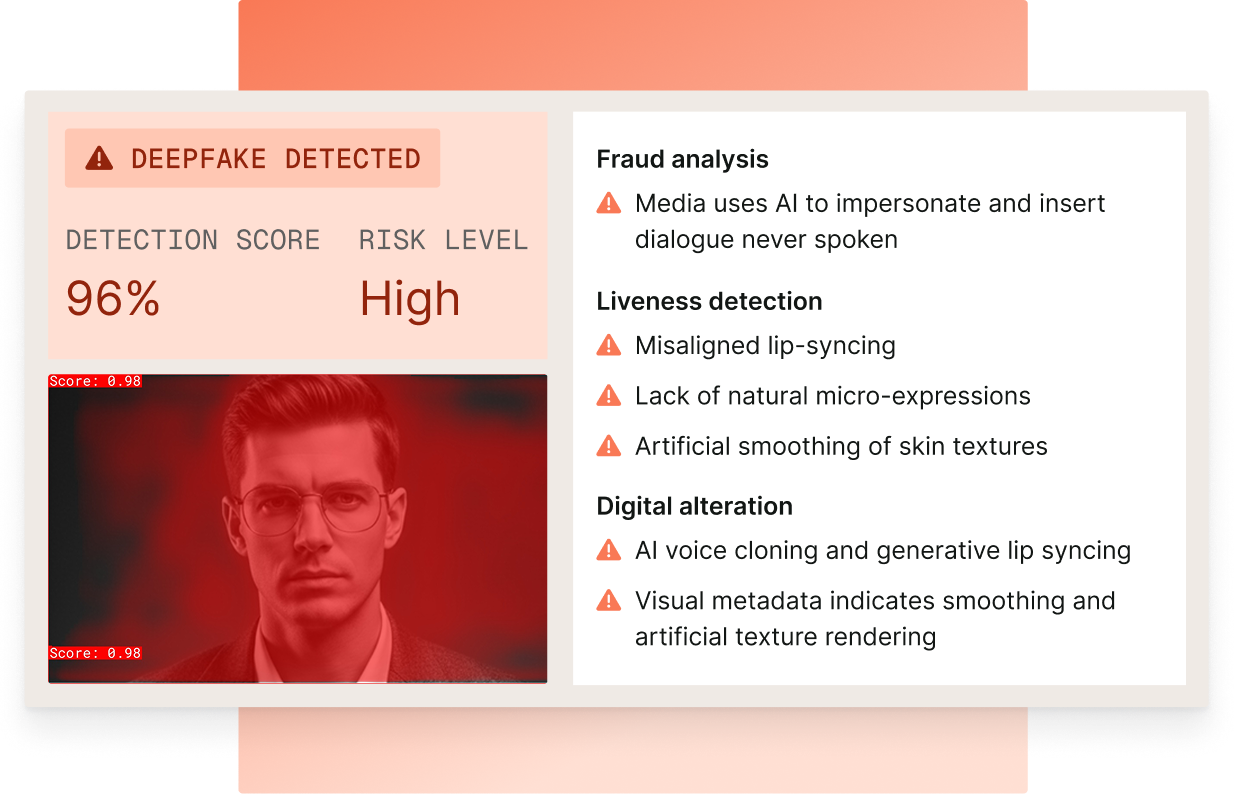

Every detection returns a human-readable forensic breakdown identifying which artifacts triggered the flag, the detected fraud type, and liveness status.

Fraud analysts get the attack type and reasoning. Compliance teams get the audit trail. Legal teams get documentation. Trust and safety teams get the triage signal.

Identifies language, dialect, emotion, and speaking style to establish a behavioral baseline. This provides the human context required for legal and forensic review without the need for prior enrollment or reference audio.

No signs of AI generation detected. What you're seeing appears to be real.

Names specific acoustic and visual artifacts, such as unnatural prosody, timbral inconsistencies, lip-sync irregularities, and skin texture anomalies. The report identifies exactly what was found, not just that a flag was triggered.

Synthetic content identified. Check the breakdown for what the model detected and why.

Extracts the core message intent and provides a transcription of the dialogue. Intelligence analyzes the narrative context of the interaction to document exactly what was said and the intent behind the communication.

No confident conclusion. Common with heavily compressed or low-quality media.

Determines liveness to confirm whether a real person was physically present during capture. This includes anti-cheating detection, which screens for behavioral indicators such as neutral affect or eye movements.

Attack types and vectors are identified with confidence reasoning, covering virtual kidnapping, executive impersonation, and account takeover. Analysts get a full fraud analysis explaining the intent and confidence level of the detected threat.

Flags post-capture manipulation, such as editing, splicing, or tampering, even when the content is not AI-generated. It also checks for misinformation and disinformation, identifying staged recordings or synthetic background replacements.

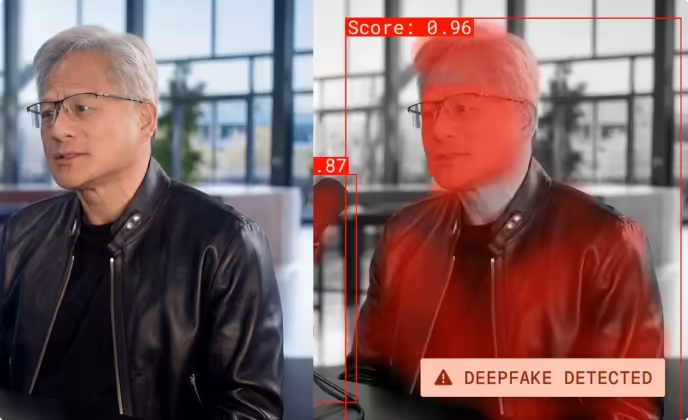

The abbreviated example below is drawn from a real detection run against a video showing President Trump walking unsteadily out of what appeared to be the Walter Reed National Military Medical Center. The video was scored as synthetic and the audio as real.

Deformed hands, fingers merging into fabric, distorted facial features, significant lighting inconsistencies, overly smoothed skin, inconsistent signage using an unofficial logo and gibberish text

Assessment: Deepfake

Video lacks biometric consistency. Structural failures in the rendering of hands and background faces are definitive indicators of generative AI.

Assessment: Not real person

Assessment: Fully synthetic AI-generated video

The video is AI-generated. It does not depict a real event, confirmed by fact-checkers and official sources.

The video was identified as 100% AI-generated. The use of a deepfake to impersonate a public figure or fabricate a scenario is a definitive indicator of cheating and high-level deception.

This is a synthetic media file created to impersonate a former president in a compromising medical state. It is designed to spread misinformation regarding his health for political influence or to incite public concern.
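For teams that want to route breakdowns like the one above into their own triage tooling, the sketch below shows one way the fields could be held in a structured record. It is illustrative only: the class, field names, and the `needs_review` helper are assumptions made for this example and do not reflect Resemble's actual response schema or API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    # Mirrors the three outcomes described on this page (hypothetical names).
    REAL = "real"
    SYNTHETIC = "synthetic"
    INCONCLUSIVE = "inconclusive"


@dataclass
class ForensicReport:
    # Hypothetical field names; not the product's real response format.
    video_verdict: Verdict
    audio_verdict: Verdict
    artifacts: list[str] = field(default_factory=list)
    liveness: str = ""
    fraud_analysis: str = ""


def needs_review(report: ForensicReport) -> bool:
    """Flag a report for analyst triage unless every modality is scored real."""
    verdicts = (report.video_verdict, report.audio_verdict)
    return any(v is not Verdict.REAL for v in verdicts)


# Values paraphrased from the sample report: synthetic video, real audio.
example = ForensicReport(
    video_verdict=Verdict.SYNTHETIC,
    audio_verdict=Verdict.REAL,
    artifacts=["deformed hands", "overly smoothed skin", "gibberish text on signage"],
    liveness="Not real person",
    fraud_analysis="Synthetic media impersonating a public figure",
)

print(needs_review(example))  # True
```

Keeping the per-modality verdicts separate matters in cases like this one, where the video is scored as synthetic while the audio is scored as real.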