Clone a voice once and hold it across 20+ languages. Every output is watermarked by default.

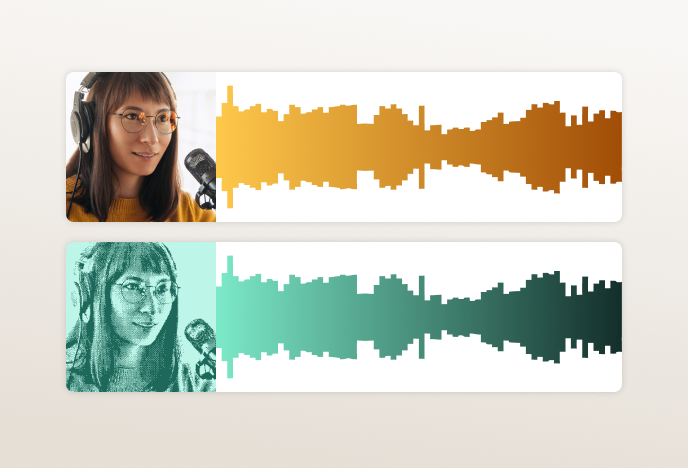

Most multilingual TTS models prioritize language count over output quality. Clone a voice in English, switch to Spanish, and the accent drifts, the rhythm changes, the speaker sounds like someone else.

The speaker sounds like themselves across every language.

Provide 10 seconds or more of reference audio. Chatterbox V3 captures voice identity, including timbre, accent, and rhythm, and holds it across every target language.

One model. No rebuilding per market.

Submit text in any of the 20+ supported languages. Chatterbox V3 synthesizes speech in the cloned voice with no separate model, training run, or vendor per language.

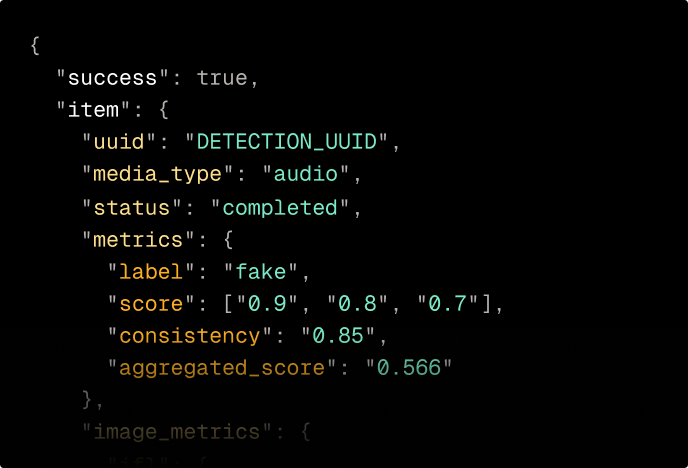

Every clip is watermarked before it leaves the model.

PerTh watermarking is embedded at generation, imperceptible to listeners, persistent through re-encoding, and verifiable on demand.

The cloned voice stays the cloned voice.

Voice identity and accent hold more consistently across language switches. Switch from English to Arabic or Japanese and the speaker still sounds like themselves.

The model says what you gave it.

Less unwanted continuation, repetition, and off-prompt speech — a persistent problem in earlier multilingual models, especially on longer inputs.

Sounds like it was meant to be said.

Better rhythm and delivery across all supported languages, optimized for voice agents and conversational AI where flat output breaks the experience.

Multilingual by default. Production-ready by design.

When a specific language needs tighter quality control, stronger dialect behavior, or regional pronunciation accuracy, the Single Language Pack provides a purpose-built model for that language.

Six languages. Single Language Pack samples generated from dedicated per-language models.