The only open-source TTS with built-in watermarking. Up to 6× faster than real-time on a GPU, with paralinguistic prompting, zero-shot cloning, and an MIT license.

Outperforms proprietary closed-source models head-to-head. Same prompts, same reference audio — zero-shot, no prompt engineering, no post-processing.

Chatterbox Turbo is the first open-source TTS that doesn’t ask you to choose your fighter. It’s fast, expressive, and MIT licensed — with every output authenticated by PerTh watermarking, so you can build voice AI that’s both open and accountable.

Streaming-ready inference for voice assistants, interactive media, and low-latency agent loops.

Lean model size — alignment-informed generation keeps latency tight without sacrificing quality.

Zero-shot cloning from a few seconds of reference audio. No training run, no fine-tune required.
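A speed claim such as "6× faster than real-time" comes down to one ratio: seconds of audio produced divided by seconds spent generating it. Here is a minimal Python sketch for measuring that on your own hardware. The helper names are illustrative and are not part of the Chatterbox package.

```python
import time
from typing import Any, Callable, Tuple


def audio_seconds(num_samples: int, sample_rate: int) -> float:
    """Duration of a mono waveform in seconds."""
    if sample_rate <= 0:
        raise ValueError("sample_rate must be positive")
    return num_samples / sample_rate


def speedup_over_realtime(audio_secs: float, synthesis_secs: float) -> float:
    """Seconds of audio produced per second of compute.

    A value of 6.0 means generation runs 6x faster than real-time.
    """
    if synthesis_secs <= 0:
        raise ValueError("synthesis_secs must be positive")
    return audio_secs / synthesis_secs


def timed(fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Tuple[Any, float]:
    """Run fn and return (result, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    # Hypothetical numbers: 12 s of 24 kHz audio generated in 2 s.
    duration = audio_seconds(num_samples=288_000, sample_rate=24_000)
    print(speedup_over_realtime(duration, synthesis_secs=2.0))
```

Wrap your own generation call in `timed(...)` and pass the elapsed time to `speedup_over_realtime`. Exclude model loading and run a warm-up call first, since the first call on a GPU typically includes one-time setup cost.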

Everything you expect from a modern TTS model — and a few things no other open-source model ships with.

First open-source model with emotion exaggeration control. Adjust intensity from monotone to dramatically expressive with a single parameter.

Faster-than-realtime inference with alignment-informed generation. Perfect for real-time applications, voice assistants, and interactive media.

Clone any voice with just a few seconds of reference audio. No training required. Includes easy voice conversion scripts.

Built-in PerTh watermarking on every generated audio file. Know when content was created by Chatterbox while maintaining high audio quality.

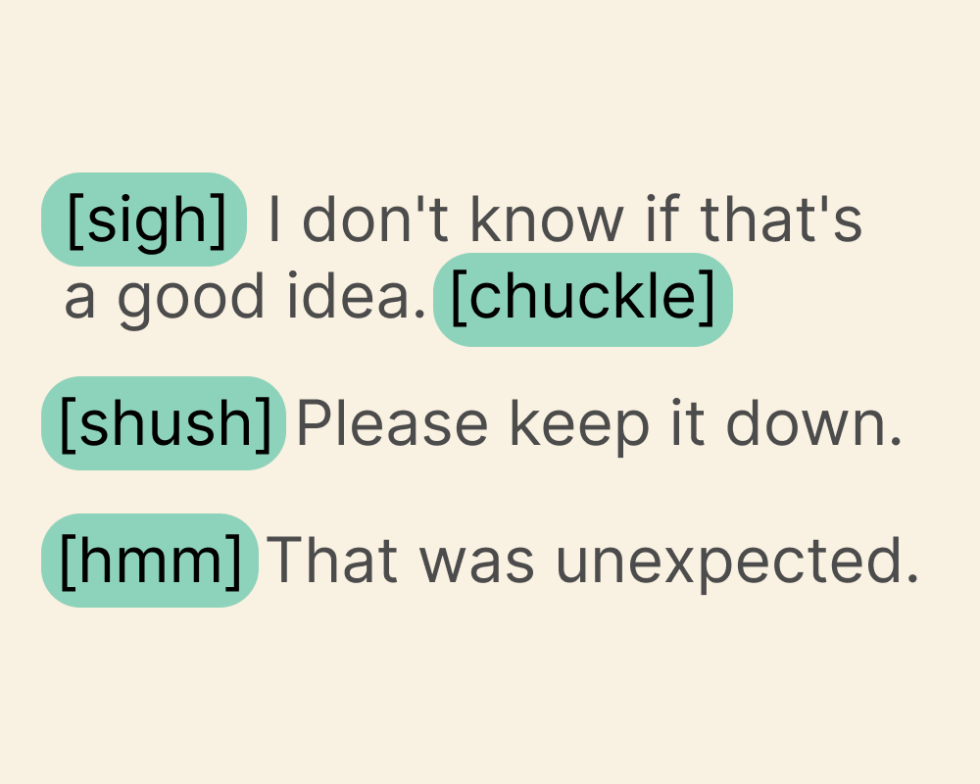

Text-based tags that tell the model to perform natural vocal reactions in the cloned voice. Supported tags include sigh, gasp, cough and more.

A single pip install, comprehensive docs, and reference code. Built by developers, for developers — available on GitHub and Hugging Face.

We ran a test through Podonos comparing Chatterbox Turbo against ElevenLabs Turbo v2.5, Cartesia Sonic 3, and VibeVoice 7B. All systems produced audio from 5–10 second reference clips and identical text inputs — zero-shot, no prompt engineering.

Voice AI that sounds human — complete with reactions and emotions expressed in sighs, laughs, and more.

Chatterbox Turbo introduces paralinguistic prompting: text-based tags that tell the model to perform natural vocal reactions in the cloned voice.

The model performs these reactions naturally, in the same cloned voice, with the same emotional tone — no post-processing, no splicing, no manual audio editing.
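Because the tags live in the input text, a script can be checked before it reaches the model. The sketch below assumes tags are written inline in square brackets, for example `[sigh]`, and only knows the four tags named on this page. The full supported set may be larger, and the helper names are hypothetical.

```python
import re
from typing import List

# Only the tags named on this page; the real supported set may be larger.
KNOWN_TAGS = {"sigh", "gasp", "cough", "laugh"}

# Assumption: a tag is a single word wrapped in square brackets.
_TAG_PATTERN = re.compile(r"\[([A-Za-z_]+)\]")


def find_tags(text: str) -> List[str]:
    """Return every bracketed tag in the text, lowercased, in order."""
    return [tag.lower() for tag in _TAG_PATTERN.findall(text)]


def unknown_tags(text: str) -> List[str]:
    """Return the tags in the text that are not in KNOWN_TAGS."""
    return [tag for tag in find_tags(text) if tag not in KNOWN_TAGS]


if __name__ == "__main__":
    script = "Well [sigh] I suppose we can try again. [laugh]"
    print(find_tags(script))
    print(unknown_tags(script))
```

A check like this catches typos such as `[sihg]` before you spend GPU time on a take that reads the tag aloud or ignores it.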

Every audio file generated by Chatterbox includes Resemble AI’s PerTh (Perceptual Threshold) Watermarker — a deep neural network that embeds data in an imperceptible, difficult-to-detect way. This isn’t just a feature; it’s our commitment to responsible AI deployment.

The watermarker operates on principles of psychoacoustics — exploiting the way we perceive audio to find sounds that are inaudible, and then encoding data into those regions.

The result: authenticated audio that sounds unchanged to humans but stays traceable for detection, provenance, and incident response.
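Detection can be scripted. The sketch below assumes the watermarker is available as the `perth` Python package (published as `resemble-perth`), with a `PerthImplicitWatermarker` class whose `get_watermark` method returns a score near 1.0 for watermarked audio and near 0.0 otherwise. Those names follow the project's published example as best we can tell, so check them against the current repository before relying on them. The 0.5 threshold and the helper names are our own choices.

```python
def interpret_score(score: float, threshold: float = 0.5) -> bool:
    """Treat scores at or above the threshold as 'watermark present'.

    The threshold is an illustrative choice, not a documented value.
    """
    return float(score) >= threshold


def is_watermarked(path: str) -> bool:
    """Load an audio file and ask the PerTh watermarker for a score."""
    # Imported lazily so this module loads without the audio dependencies.
    import librosa
    import perth  # assumption: installed via `pip install resemble-perth`

    # sr=None keeps the file's native sample rate.
    audio, sample_rate = librosa.load(path, sr=None)

    # Assumed API: same watermarker class used for embedding and detection.
    watermarker = perth.PerthImplicitWatermarker()
    score = watermarker.get_watermark(audio, sample_rate=sample_rate)
    return interpret_score(score)
```

Call `is_watermarked("clip.wav")` on a file you generated to confirm the mark survives your own export and encoding pipeline.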

Learn more about PerTh →

A single install, a permissive license, and two distribution channels. Pick your path: the model is the same on all of them.

Try Chatterbox Turbo inside Resemble AI — no install required. Use the hosted playground to test voices, tune emotion, and ship straight to production.

Use on Resemble AI →

MIT-licensed source, reference scripts, and voice conversion tools. Clone the repo, read the docs, open a PR.

View repo on GitHub →

Weights hosted on Hugging Face for fast pulls, versioning, and Spaces integration. Use with transformers or pin a revision.

$ pip install chatterbox-tts
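After installing, a first generation might look like the sketch below. It assumes the package exposes a `ChatterboxTurboTTS` class in `chatterbox.tts_turbo` with `from_pretrained`, `generate(text, audio_prompt_path=...)`, and an `sr` sample-rate attribute, as in the project's published example. Treat those names as assumptions and confirm them in the repository. The wrapper function and file names are hypothetical.

```python
def synthesize(text, reference_wav, out_path="out.wav", device="cuda"):
    """Generate speech in the voice of reference_wav and save it to out_path."""
    # Imported lazily so this module loads without torch or chatterbox installed.
    import torchaudio
    from chatterbox.tts_turbo import ChatterboxTurboTTS  # assumed import path

    # Downloads the weights on first use, then loads them onto the device.
    model = ChatterboxTurboTTS.from_pretrained(device=device)

    # Zero-shot cloning: the reference clip is passed at generation time.
    wav = model.generate(text, audio_prompt_path=reference_wav)

    # model.sr is assumed to hold the model's output sample rate.
    torchaudio.save(out_path, wav, model.sr)
    return out_path


if __name__ == "__main__":
    # Hypothetical file name; supply your own few-second reference clip.
    synthesize("Thanks for calling back [laugh], got a minute?", "reference.wav")
```

Pass `device="cpu"` if no GPU is available; expect generation to be slower than the GPU figures quoted on this page.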

Quick answers on licensing, performance, and what’s in the box.

Supported tags include [sigh], [gasp], [cough], and [laugh]; the model performs them in the cloned voice with matching emotional tone. No splicing or manual editing required.