The Deepfake Watchlist is Resemble AI's weekly surveillance of synthetic media incidents, ongoing cases, and disputed content shaping the news cycle. Each week we track confirmed incidents, emerging attack vectors, and claims under investigation, alongside the provenance, detection, and policy threads running underneath them.

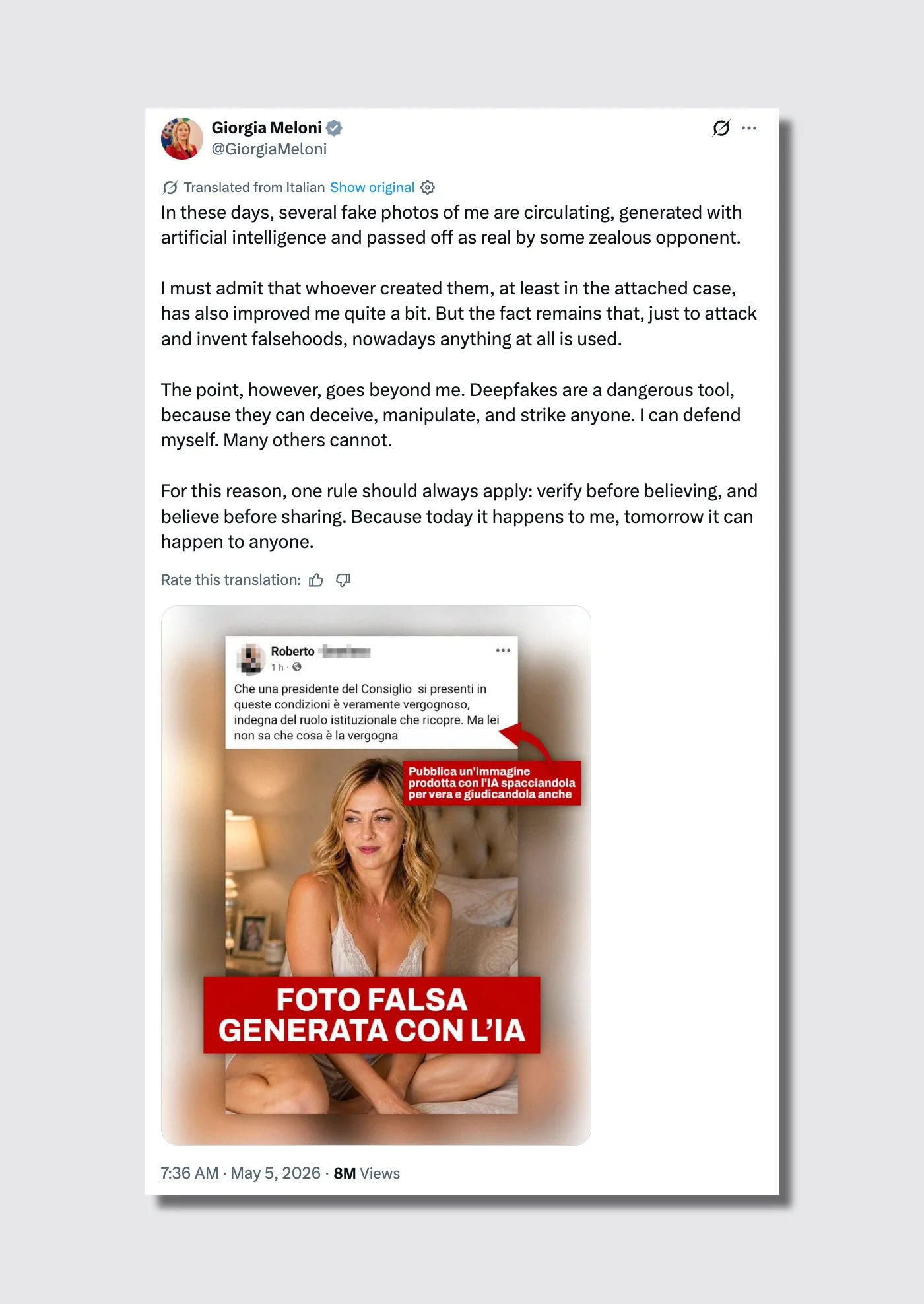

★ Featured: Giorgia Meloni's AI lingerie deepfake and what she said about it

On May 5, Italian Prime Minister Giorgia Meloni publicly denounced the circulation of an AI-generated image showing her in bed in lingerie. She shared the fabricated image on her own Facebook account, alongside a post from a user named Roberto who had called her "shameful" for it. Reuters and AP confirmed the image was AI-generated.

- Category: CSAM / NCII

- Type: Attack

- Modality: Image

- Policy / Regulatory: Italy has no dedicated AI deepfake statute; Meloni's prior 2024 lawsuit over pornographic deepfakes was brought under defamation law, and the current incident has not yet been formally reported to police.

- Trend: Female political leaders targeted with sexualized synthetic imagery as a tool of political delegitimization, distinct from standard electoral disinformation because the goal is reputational damage rather than voter manipulation.

- Attack vector: AI-generated still image fabricated to resemble an intimate personal photograph, circulated on social media with an accompanying narrative designed to provoke public shame.

- What we saw in the content: The image carries the forensic signatures we train DETECT-3B Omni to flag, for example:

  - Skin texture has the over-smoothed, uniform quality that distinguishes AI-generated portraiture from photographic grain.

  - Fabric rendering on the lingerie shows the flat, slightly repetitive patterning typical of diffusion model outputs rather than natural textile variation under light.

  - Hair near the face has the characteristic soft halo effect produced when AI generators feather the boundary between subject and background.

  - Proportional geometry at the wrists and hands shows subtle distortion, a persistent artifact in full-body AI image generation.
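As a rough illustration of the skin-texture cue in the list above (over-smoothed surfaces versus photographic grain), the sketch below measures how much fine-grained variation a grayscale patch contains using the variance of a simple Laplacian filter. This is a toy heuristic written for this article, not a description of how DETECT-3B Omni works. The function names `laplacian_variance` and `synthetic_patch` are hypothetical, and the two patches are synthetic stand-ins rather than real image data.

```python
import random
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian over the interior of a 2D grayscale grid.

    Low values mean very little pixel-to-pixel texture (a smooth surface);
    higher values mean more fine-grained variation, such as sensor grain.
    """
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            lap = (
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
            responses.append(lap)
    return pvariance(responses)


def synthetic_patch(size, grain_sigma, seed):
    """Hypothetical test patch: a gentle horizontal gradient plus Gaussian 'grain'."""
    rng = random.Random(seed)
    return [
        [128 + 0.5 * x + rng.gauss(0, grain_sigma) for x in range(size)]
        for _ in range(size)
    ]


# Assumed values: sigma 6.0 stands in for photographic grain, 0.5 for an over-smoothed render.
grainy = synthetic_patch(32, grain_sigma=6.0, seed=0)
smooth = synthetic_patch(32, grain_sigma=0.5, seed=0)

print("grainy patch score:", laplacian_variance(grainy))
print("smooth patch score:", laplacian_variance(smooth))
```

The grainy patch scores much higher than the smooth one. A single score like this is easy to fool with added noise or compression, which is one reason a cue of this kind is only ever weak evidence on its own.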

Meloni’s handling of the image provides a blueprint for political counter-messaging. Rather than ignoring it, she posted the fake herself, named the person who had weaponized it, and said something precise: "Deepfakes are a dangerous tool because they can deceive, manipulate and target anyone. I can defend myself. Many others cannot." In 2024, she sued two men who had posted pornographic deepfakes of her on a US porn site, seeking €100,000 in damages, which she pledged to donate to a domestic violence fund. That prosecution took four years. The current image circulated for days with no confirmed platform removal.

The EU's AI Act amendment agreed this week, which explicitly bans AI systems that generate sexualized deepfakes, addresses the supply-side problem directly. But between a law passing and enforcement that reaches individual victims, there is a gap measured in years, and the Meloni case is what living inside that gap looks like.

1. Inside Haotian AI, the Chinese real-time deepfake software sold to scammers

404 Media's 'HELLO BOSS': Inside the Chinese Realtime Deepfake Software Powering Scams Around the World, published May 7, documents reporter Joseph Cox's investigation into Haotian AI, Chinese-developed real-time deepfake software marketed openly to scammers. Cox obtained a copy after weeks of contact with sellers and used it to swap his face for another person's in a live Microsoft Teams call, with full lip-sync and without the illusion breaking.

- Category: Fraud / Impersonation

- Type: Attack

- Modality: Video

- Policy / Regulatory: Haotian AI operates outside US or EU jurisdiction; the software is distributed through Chinese-language channels linked by 404 Media to money laundering networks, creating significant enforcement obstacles for Western law enforcement.

- Trend: Real-time deepfake fraud tools moving from specialized criminal markets to consumer distribution, with explicit platform integrations for Zoom, Teams, WhatsApp, TikTok, Instagram, and YouTube.

- Attack vector: Live video call face-swap enabling a fraudster to impersonate any target in real time, including demonstrated use cases of impersonating US law enforcement.

This is the most consequential investigative piece of the week because it documents something the security community has been warning about for two years: real-time deepfake software that works in production, is available to buy, and is already being used against real targets. Cox tested Haotian AI on a live Teams call and watched his own face become another person's in real time, including stubble, expression, and eye movement. The software integrates natively with Zoom, Teams, WhatsApp, TikTok, and Instagram, and its sellers demonstrate use cases including impersonation of US law enforcement. 404 Media linked it to Chinese money laundering networks, suggesting the tooling and the financial infrastructure for this fraud category are already integrated.

The CBS Chicago story this week, a suburban man losing $69,000 to a scammer who displayed an AI-generated US Marshals badge on a video call, is the static-prop version of this attack: a faked credential rather than a faked face. Haotian AI is what it looks like when the impersonation itself goes live on video. Platform-level liveness detection is the obvious defensive response, and it is being developed, but Haotian AI is built to defeat those checks, and the software is improving faster than detection is.

2. EU strikes deal to ban AI systems that generate sexualized deepfakes

Reuters reported on May 7 that European Parliament negotiators and EU member state governments had agreed that day to amend the AI Act to explicitly ban AI systems that generate sexualized deepfakes. MEP Michael McNamara told AFP, "Today the EU has drawn a red line." Negotiators separately agreed to delay high-risk AI enforcement from August 2026 to December 2027 for standalone systems and to August 2028 for embedded systems. The August 2026 deadline for Article 50 transparency and labeling obligations is separate from the high-risk enforcement rules and remains unchanged.

- Category: CSAM / NCII

- Type: Response

- Modality: Image, Video

- Policy / Regulatory: The ban on nudifier applications will be incorporated into the AI Act as a prohibited practice; the delay to high-risk AI enforcement was a negotiated tradeoff proposed by the Commission to reduce compliance burden on European businesses.

- Trend: European regulators moving from platform-level content moderation demands to supply-side prohibition of the tools themselves, directly in response to the Grok/xAI mass sexualized deepfake incident of early 2026.

- Attack vector: AI image and video generation systems with nudification capabilities, distributed as consumer applications and API services.

The Grok/xAI incident that triggered this amendment, in which xAI's chatbot generated millions of sexualized images including apparent depictions of minors, was documented in the Deepfake Watchlist: Week of April 20, 2026. The EU's response time, from incident to agreed amendment, was roughly four months, unusually fast for EU legislative action and reflecting how politically radioactive the Grok situation became across member states.

The strategic pivot here is clear. While high-risk AI enforcement gets pushed back 16 months for standalone systems, the transparency obligations of Article 50, including labeling requirements for AI-generated content, remain on the August 2026 schedule. This suggests a tiered enforcement philosophy: the EU intends to mandate transparency immediately and ban the most egregious tools without waiting, while giving industry a longer lead time on the broader safety compliance framework.
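The delay figures can be checked directly from the dates quoted in this section. The sketch below is a small worked calculation, not an official timeline: the source gives only months, so the day-of-month values are placeholder assumptions, and the constant and function names are hypothetical.

```python
from datetime import date


def months_between(start, end):
    """Whole calendar months from start to end, ignoring the day of month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


# Day of month is set to 1 as a placeholder; only the month and year come from the reporting.
ORIGINAL_HIGH_RISK = date(2026, 8, 1)    # original high-risk enforcement date
STANDALONE_DEADLINE = date(2027, 12, 1)  # new date for standalone systems
EMBEDDED_DEADLINE = date(2028, 8, 1)     # new date for embedded systems
ARTICLE_50_DEADLINE = date(2026, 8, 1)   # transparency and labeling, unchanged

print(months_between(ORIGINAL_HIGH_RISK, STANDALONE_DEADLINE))  # 16
print(months_between(ORIGINAL_HIGH_RISK, EMBEDDED_DEADLINE))    # 24
print(months_between(ORIGINAL_HIGH_RISK, ARTICLE_50_DEADLINE))  # 0
```

On these dates, standalone systems get 16 extra months, embedded systems get 24, and the Article 50 obligations do not move at all.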

3. Kash Patel's FBI video appears to have used AI to recreate the Beastie Boys' "Sabotage"

NPR's analysis from May 5 found that at least six clips in an FBI promotional video posted by Director Kash Patel on May 4 were frame-by-frame AI recreations of shots from the Beastie Boys' 1994 Spike Jonze-directed "Sabotage" music video. Bellingcat researcher Kolina Koltai assessed the video as "highly likely" AI-generated, and UC Berkeley professor Hany Farid wrote that "the similarities are hard to explain otherwise."

- Category: Brand / Likeness

- Type: Attack

- Modality: Video

- Policy / Regulatory: Neither the Beastie Boys nor the FBI responded to NPR's request for comment; no copyright claim has been filed, and enforcement against a federal agency would face significant jurisdictional complexity.

- Trend: US government officials using AI-generated content to recreate copyrighted creative works for promotional purposes, consistent with a pattern of the current administration co-opting popular culture without authorization.

- Attack vector: Image-to-video AI model fed screenshots from the original "Sabotage" music video, producing near-identical synthetic clips with characteristic AI artifacts, including a distorted license plate reading "No Fraud" in the opening shot.

The forensic tells are detailed in NPR's analysis: in one shot where a car spins out, window grilles visible in the original are missing in the FBI version, consistent with an image-to-video model reconstructing a scene from a still frame. The "No Fraud" license plate shows characteristic AI text rendering artifacts, a particular irony given the post was about fraud investigations. Hany Farid's assessment is precise: the clips were most likely created by feeding screenshots from the original video into an image-to-video model.

The broader context is that the Trump administration has repeatedly used AI content that co-opts artists' work over their explicit objections. This adds a new dimension: a federal agency, using public resources, apparently generating AI recreations of copyrighted creative works without license or disclosure. The Beastie Boys have historically been litigious about unauthorized use of their music, and "Sabotage" was directed by Spike Jonze, whose visual style is itself a form of intellectual property.

4. Spencer Pratt's Batman AI campaign ad goes viral with 4.1 million views

NBC News reported on May 6 that an AI-generated video depicting Los Angeles mayoral candidate Spencer Pratt as a Batman-style superhero went viral with over 4.1 million views on X. The video shows Pratt saving the city from a cast of villains including Mayor Karen Bass, Governor Gavin Newsom, and Kamala Harris. It was produced using Seedance 2.0 by filmmaker Charles Curran and reposted by Pratt on May 5, the night before a scheduled televised debate.

- Category: Political / Electoral

- Type: Attack

- Modality: Video

- Policy / Regulatory: California's AB 2839, which would have required disclosure of AI-generated content in political advertising, was blocked by a federal judge in 2024 on First Amendment grounds; no disclosure requirement currently applies to this content.

- Trend: AI-generated political video migrating from disinformation concern to deliberate satirical campaign tactic, with the candidate openly endorsing and amplifying the synthetic content.

- Attack vector: AI video generation using Seedance 2.0 to create political satire that depicts real public officials in fabricated scenarios, distributed via X without AI disclosure labeling.

The video is openly AI-generated, amplified by the candidate himself, and explicitly satirical. By the most straightforward read it does not fit the pattern of synthetic media used to deceive. What it does do is fabricate video depictions of sitting public officials, including the governor of California and a former vice president, in scenarios they did not consent to, using tools whose output is indistinguishable from real video at first glance.

By the time the debate cameras turned on, the video had already racked up 4.1 million views, effectively front-running the official political discourse. This underscores a structural shift we’ve been tracking: synthetic spectacle now routinely reaches a larger audience in hours than a substantive policy discussion can reach in a night. Whether it’s a fringe candidate or a state actor behind the tools, the result is a captured news cycle where the AI narrative outpaces the actual event.

Honorable mentions

The Atlantic, Deepfakes Are Coming for Your Bank Account, May 2. Reporter Lila Shroff used ChatGPT Images 2.0 to generate over 100 fraudulent documents including fake IDs, passports, opioid prescriptions, and bank alerts, finding that the model's newly solved text-rendering capability produces forgeries convincing enough to deceive a hotel receptionist or healthcare provider. The text-in-image limitation was itself a detection signal. That signal is now gone.

Axios, Doctors' growing AI deepfakes problem, May 6. The American Medical Association (AMA) formally called on federal and state lawmakers to close legal gaps around AI deepfakes of physicians used to sell fraudulent health products, with AMA CEO John Whyte saying "we shouldn't have to make the public detectives to determine whether something's not a deepfake."

Koreaboo, BTS "intimate" photos TikTok trend sparks massive outrage, May 6. Hundreds of TikTok videos depicting BTS members V and Jungkook in AI-generated intimate scenarios went viral, with creators posting step-by-step tutorials. #HYBEProtectJungkook trended as fans demanded action from the group's agency HYBE. The tools and the category of harm are the same as in the cases above, and no platform enforcement distinguishes fan-created content from commercially distributed content.

CBS Chicago, Suburban man loses $69K to scammer using AI-generated US Marshals badge, May 6. A Chicago-area man lost $69,000 to a scammer who displayed an AI-generated law enforcement badge on a video call and told him he faced arrest. The victim's son said his father was too embarrassed to show his face. This is the documented civilian cost of the attack vector that Haotian AI industrializes.

The pattern

- Three of this week's stories, Haotian AI, the Meloni deepfake, and the Patel FBI video, come from completely different categories and geographies, but they share a structural condition: the tools used to produce the harm are consumer-grade, widely available, and improving faster than any institutional response. Haotian AI costs a few hundred dollars and works on Teams. ChatGPT Images 2.0 is a subscription product. The AI model used to fabricate a lingerie image of a head of government is the same class of tool used to generate birthday card illustrations. The democratization of these tools is not a future concern; it is the current operating environment, and the Meloni and CBS Chicago stories together document what that looks like at the individual level.

- The EU's agreement this week to ban nudifier applications is the most direct supply-side response in the Watchlist's short history. It directly addresses what the Meloni deepfake, the BTS content, and the Grok/xAI incident of early 2026 all represent: a class of AI tool whose primary function in practice is producing non-consensual sexualized imagery. The delay to high-risk AI enforcement is a real concession to industry, and the gap between drawing a legal line and enforcing one across borders remains the practical challenge. But the direction of travel in European AI governance is now clearly toward prohibition of the most harmful tool categories, not just platform-level content moderation.

- The Pratt AI campaign ad and the Patel FBI video both normalize the use of synthetic content in institutional and political communication without disclosure, and both landed in the same week that the EU confirmed its Article 50 transparency labeling obligations would stay on their August 2026 schedule. That tension, between normalized non-disclosed AI use in the US and mandatory labeling obligations taking effect in Europe in August, is a thread the Watchlist will be tracking closely over the next three months. August is no longer far away.

Watching next week

- Haotian AI law enforcement response. Now that 404 Media has named and documented the software and its links to Chinese money laundering networks, watch for whether US or EU law enforcement moves against the distribution infrastructure.

- EU AI Act Article 50 countdown. Transparency labeling obligations remain on schedule for August 2026; watch for platform compliance documents and any early enforcement signals from the AI Office.

- Musk/xAI Paris prosecution. Still open with no resolution; the French prosecutor's investigation into Grok deepfake content on X continues regardless of the Musk v. Altman trial in California.

- AMA legislative push. Whether the formal AMA call for legislation produces any concrete bill introduction in the coming weeks.

The Deepfake Watchlist publishes every Friday. Subscribe to receive bi-weekly updates in your inbox, or follow Zohaib Ahmed on LinkedIn for the weekly social companion. Track every documented incident in the Resemble Deepfake Incident Database, and read the full methodology in our 2025 Deepfake Threat Report.