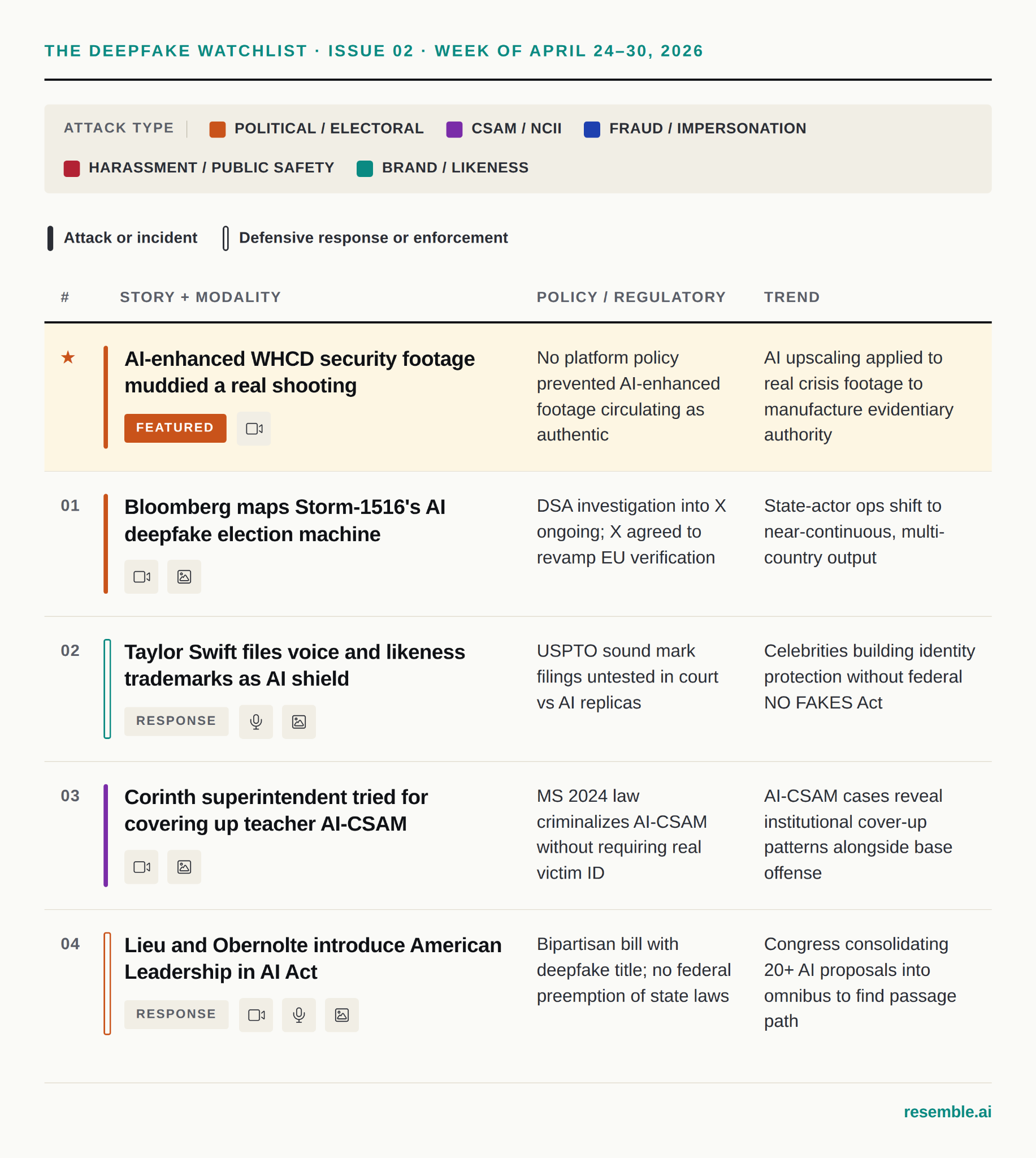

The Deepfake Watchlist is Resemble AI's weekly surveillance of synthetic media incidents, ongoing cases, and disputed content shaping the news cycle. Each week we track confirmed incidents, emerging attack vectors, and claims under investigation, alongside the provenance, detection, and policy threads running underneath them.

★ Featured: The AI-enhanced WHCD security footage that muddied a real shooting

Following the April 25 shooting at the White House Correspondents' Dinner, a Facebook account posted an AI-enhanced version of authentic CCTV footage, falsely claiming it was "unedited raw security footage" of the suspected shooter. Lead Stories confirmed this on April 27, and PolitiFact independently verified it on April 29. The enhanced clip carried a visible CapCut AI watermark and introduced artifacts not present in the original footage published by President Trump on Truth Social that same night.

- Category: Political / Electoral

- Type: Attack

- Modality: Video

- Policy / Regulatory: No platform policy prevented AI-enhanced footage from circulating as authentic; the CapCut watermark served as the primary forensic anchor for debunking, not any platform-level disclosure requirement.

- Trend: AI upscaling tools are being applied to genuine low-quality footage to manufacture the appearance of evidentiary authority, a layer distinct from fully synthetic generation.

- Attack vector: AI video enhancement via CapCut applied to authentic CCTV footage, then recirculated on Facebook with a misleading caption claiming it was unedited original material.

- What we saw in the content: The video carries the forensic signatures we train DETECT-3B Omni to flag, for example:

- The CapCut AI watermark is visible in the top-left corner throughout the clip, a direct indicator the footage was processed through an AI enhancement tool.

- Text on a Secret Service agent's jacket degrades into gibberish mid-clip, a common artifact of AI upscalers reconstructing text from insufficient pixel data.

- A man's headwear transitions from a cap to a hat within a single continuous shot, indicating frame-by-frame inconsistency introduced by the AI enhancement model.

- An agent appears to kneel spontaneously in front of a colleague in the background with no operational logic, consistent with AI hallucination of human posture across frames.
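The headwear change described above is a temporal inconsistency: an attribute that should stay fixed within one continuous shot changes between frames. As a minimal sketch of that idea, and not a description of how DETECT-3B Omni works, the Python below assumes a hypothetical upstream step has already produced one label per frame for a tracked object, and simply reports where the label flips and stays flipped. The function name `find_label_flips`, the `min_run` parameter, and the sample labels are illustrative assumptions.

```python
from typing import List, Tuple


def find_label_flips(labels: List[str], min_run: int = 2) -> List[Tuple[int, str, str]]:
    """Return (frame_index, old_label, new_label) for each stable label change.

    A change is reported only when the new label persists for at least
    `min_run` consecutive frames, which filters out single-frame noise.
    """
    flips: List[Tuple[int, str, str]] = []
    if not labels:
        return flips
    current = labels[0]
    i = 1
    while i < len(labels):
        if labels[i] != current:
            # Measure how many consecutive frames carry the new label.
            j = i
            while j < len(labels) and labels[j] == labels[i]:
                j += 1
            if j - i >= min_run:
                flips.append((i, current, labels[i]))
                current = labels[i]
            i = j  # skip past the run we just examined
        else:
            i += 1
    return flips


# Hypothetical per-frame labels for one tracked person in a single shot.
shot_labels = ["cap", "cap", "cap", "hat", "hat", "hat"]
print(find_label_flips(shot_labels))
```

A flip inside a single uncut shot is only a prompt for human review, since a real scene can also change (someone can take a hat off); the sketch flags candidates and does not decide authenticity.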

What makes this week's Featured Fake unusual is that the underlying footage is real. The shooting on April 25 was a genuine event, and the original CCTV clip was published by President Trump on Truth Social that same night. What circulated on Facebook the following day was not a fabrication of an event that didn't happen; it was a fabrication of evidence for an event that did. Someone ran authentic low-resolution security footage through CapCut, produced a sharper-looking version with the watermark still in frame, and posted it as "unedited raw security footage" in a news environment where millions of people were scanning social media for confirmation of what happened.

The four forensic signals are clearly observable and documented in the Lead Stories fact-check with screenshots: the CapCut watermark, the jacket text rendering to gibberish, the headwear change mid-shot, and the agent kneeling for no reason. The watermark identifies the tool; the other three are artifacts of an AI upscaler trying to reconstruct detail from pixels that don't contain it. The WHCD case was caught partly because the perpetrator was careless and left the watermark in, and partly because Trump's Truth Social post provided a baseline. A more careful actor, running the same enhancement with a cleaner tool, produces a much harder detection problem. Provenance at the point of sharing, not just forensic analysis after the fact, is what closes that gap.
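To make the idea of a baseline concrete, here is a minimal sketch of the simplest possible check at the point of sharing. It assumes a hypothetical setup in which the original publisher has released a SHA-256 digest of the source clip; the function names and the sample bytes are illustrative, not part of any platform's real workflow. The check only catches byte-exact copies, so any re-encoding, including a platform's own transcoding, will fail it, which is why practical provenance schemes rely on signed metadata rather than raw file hashes.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_baseline(candidate: bytes, baseline_digest: str) -> bool:
    """True only if the candidate is byte-for-byte identical to the baseline."""
    return sha256_hex(candidate) == baseline_digest.lower()


# Hypothetical stand-ins for the original clip and an altered copy.
original_clip = b"original low-resolution CCTV bytes"
enhanced_clip = b"AI-enhanced bytes with watermark"

published_digest = sha256_hex(original_clip)  # assumed to be published by the source

print(matches_baseline(original_clip, published_digest))
print(matches_baseline(enhanced_clip, published_digest))
```

A failed match does not prove manipulation; it only means the file is not the published original, which is the point at which a claim like "unedited raw footage" deserves scrutiny.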

1. Bloomberg maps Storm-1516's AI deepfake election machine

Bloomberg's "Russia's Disinformation War Floods Social Media With Dangerous False Claims," published April 27, documents Storm-1516, a GRU-backed influence operation that more than doubled its AI-deepfake output in Q1 2026 compared to the same period in 2025. The operation targeted elections in Hungary, Armenia, and Moldova with synthetic video content, including one fabricated clip depicting Hungarian opposition leader Péter Magyar that accumulated 2.7 million views before Euronews debunked it.

- Category: Political / Electoral

- Type: Attack

- Modality: Video, Image

- Policy / Regulatory: The EU's Digital Services Act investigation into X's handling of Storm-1516 content is ongoing; many of the most prolific accounts remain active with blue-check status.

- Trend: State-actor synthetic media operations are moving from episodic election interference to near-continuous, multi-country output.

- Attack vector: AI-generated video impersonating news broadcasts and whistleblowers, distributed through paid blue-check accounts on X and amplified by Prigozhin-linked influencer networks.

The Bloomberg investigation gives us the clearest picture yet of what a mature state-actor synthetic media operation looks like in 2026. Storm-1516 is not running occasional influence operations around elections; it is producing near-daily content across multiple countries simultaneously, with a playbook consistent enough to be tracked and attributed. The Q1 2026 output more than doubled year-over-year, which is the scaling curve of a system that has been professionalized and resourced.

The failure mode is instructive. The Magyar video got 2.7 million views before Euronews debunked it, and the debunk reached a fraction of that audience. In a closer race that asymmetry would have been the story. Bloomberg's analysis also notes that many of Storm-1516's most prolific X accounts remain active and anonymous, with blue checks that prioritize their content in the algorithmic feed, meaning the amplification infrastructure is still largely intact.

2. Taylor Swift files voice and likeness trademarks as AI shield

Variety's reporting from April 27 confirmed that, on April 24, Taylor Swift's company TAS Rights Management filed three trademark applications with the USPTO: two sound marks covering short spoken phrases in her voice and one visual mark covering a signature stage image. Trademark attorney Josh Gerben described the filings as "specifically designed to protect Taylor from threats posed by artificial intelligence."

- Category: Brand / Likeness

- Type: Response

- Modality: Audio, Image

- Policy / Regulatory: Trademark law has no settled precedent for protecting a celebrity's spoken voice against AI-generated replicas; the USPTO review process will take months and any enforcement would be untested in federal court.

- Trend: Celebrities building federal identity protection infrastructure through trademark registration in the absence of a passed NO FAKES Act.

- Attack vector: AI voice cloning and image synthesis that mimics a celebrity's appearance and sound without copying any specific copyrighted recording, a gap copyright law does not cover.

The Swift filings are worth paying attention to not because they immediately solve the AI deepfake problem but because they test legal infrastructure that doesn't yet exist at the federal level. Copyright protects specific recordings; it does not protect a voice as a sound. The trademark approach tries to create a legal handle for that gap: if an AI-generated replica is "confusingly similar" to a registered sound mark, there may be a federal infringement claim available. Swift's voice and likeness have already been used without permission in fake political endorsements, pornographic deepfakes, and scam advertisements on Meta's platforms, so the motivation is grounded in documented harms.

The entertainment industry is building its own identity protection infrastructure in the absence of a federal likeness rights framework. The NO FAKES Act has support from OpenAI and YouTube but has not passed. Swift's trademark filings are a legal-system workaround, real and partial in equal measure.

3. Corinth superintendent on trial for covering up teacher's AI-generated CSAM

WLBT's coverage from April 28 confirmed that a federal trial began this week for Lee Childress, former superintendent of the Corinth School District in Mississippi. Childress is accused of concealing that former teacher Wilson Jones used AI to create sexually explicit deepfake videos of eight female students aged 14–16, and of allowing Jones to resign without alerting authorities.

- Category: CSAM / NCII

- Type: Attack

- Modality: Video, Image

- Policy / Regulatory: The Childress trial tests institutional accountability rather than the underlying generation conduct; Mississippi's 2024 statute removes the prior requirement that prosecutors identify a real victim depicted in actual photographs.

- Trend: AI-generated CSAM cases increasingly revealing institutional cover-up patterns alongside the underlying offense.

- Attack vector: AI image and video generation using student social media photographs, producing sexually explicit synthetic content without any physical contact with victims.

The Corinth case has two distinct dimensions. The first is the offense: a teacher used Hailuo AI to generate explicit videos of students from their social media photos. The second is the alleged institutional response: a superintendent who allegedly allowed Jones to resign rather than report the crime, after which Jones was hired by the state child welfare agency. That sequence is what makes this a federal trial rather than a prosecution of the underlying offense alone.

The victims were not filmed, and no physical contact occurred. That has not reduced the documented harm to them or their families. The framing of AI-generated CSAM cases as "victimless" because no physical abuse occurred is not a framing the law accepts, and should not be one we accept either.

4. Lieu and Obernolte introduce bipartisan American Leadership in AI Act

Nextgov/FCW reported on April 27 that Reps. Ted Lieu (D-CA) and Jay Obernolte (R-CA), co-chairs of the House Bipartisan AI Task Force, introduced the American Leadership in AI Act, a consolidated package of more than 20 prior legislative proposals that includes a dedicated title on deterring harmful deepfakes, enhancing legal remedies for victims, increasing penalties for AI-enabled fraud and impersonation, and extending whistleblower protections to employees who report AI-related risks at frontier model developers.

- Category: Political / Electoral

- Type: Response

- Modality: Video, Audio, Image

- Policy / Regulatory: The bill excludes federal preemption of state AI laws, a deliberate choice to avoid the most divisive element of the broader federal AI legislation debate.

- Trend: Congress consolidating fragmented AI proposals into omnibus packages, with bipartisan deepfake victim remedies as the most likely separable element if the full bill stalls.

- Attack vector: Not applicable as this is a legislative response covering the full range of deepfake attack vectors.

The American Leadership in AI Act is notable for what it doesn't do as much as for what it does. By excluding federal preemption, Lieu and Obernolte have sidestepped the most contested question in AI legislation right now, one on which the White House and major tech companies are actively lobbying for preemption to replace the state-law patchwork. That design choice gives this bill a better path than most of its predecessors.

The week's lineup makes the timing feel right: a federal trial over the alleged cover-up of AI-generated CSAM, a celebrity testing trademark law as a federal substitute, a GRU influence operation running across multiple elections simultaneously, and a bipartisan bill that could address several of those gaps at once. Whether it gets the floor time is the question.

Honorable mentions

KTRE / East Texas Banner, Buna ISD student accused of creating deepfake explicit picture of fellow student, April 24. Jasper County, Texas deputies arrested 17-year-old Nathaniel Davis after he admitted to using AI to alter a classmate's photo into a nude image and posting it to Snapchat. Davis was charged as an adult under Texas's deepfake NCII statute in what the county sheriff described as the county's first deepfake prosecution.

AFP / France 24, AI fakes of accused US press gala gunman flood social media, April 29. Within hours of Cole Tomas Allen being identified as the WHCD suspect, Facebook was flooded with AI-generated images placing him alongside more than 50 public figures, with fabricated captions claiming he was their "former driver" or "assistant." AFP's investigation found a separate wave of posts dressing Allen in gear for more than 40 sports teams, all apparently generated from the same "teacher of the month" photo. Digital literacy expert Mike Caulfield told AFP: "This looks a lot like the same content farm behavior, just with AI."

Digiday, The rise of deepfakes poses a new trust challenge for publishers, April 29. The piece documents 3,165 deepfake incidents in March 2026 alone, up from four in January 2020. X accounts for 51.2% of social media deepfake distribution, more than TikTok and YouTube combined.

AFP, Elon Musk snubs Paris prosecutors' summons over X and Grok, April 20. Musk did not appear for the voluntary interview in Paris as part of the French prosecutor's investigation into CSAM and deepfake content on X's Grok platform, flagged in Issue 01 as one to watch. Prosecutors confirmed the no-show was "not an obstacle to the continuation of the investigation." The US DOJ separately declined to cooperate with the probe.

The pattern

- The WHCD deepfake and the Storm-1516 investigation represent two points on the same axis: real events generating synthetic evidence, and fictional events manufactured to look like news. In both cases the speed advantage belongs to the synthetic content, the debunk follows days later, and the correction reaches a fraction of the audience the false version did. What Bloomberg shows concretely is what happens when that asymmetry is professionalized and state-funded: output doubles year over year and the attack surface is every contested election everywhere.

- The Taylor Swift trademark filings and the American Leadership in AI Act both land this week as workarounds for the same underlying gap: the absence of a federal identity rights framework in the United States. Swift is testing trademark law as a substitute for the NO FAKES Act. Congress is trying to pass deepfake victim remedies as part of an omnibus bill. The Corinth case sits at the other end of this pattern, showing what the harm looks like when neither the law nor the institution acts quickly enough.

- EU AI Act Article 50 enforcement begins in August, requiring transparency labeling for AI-generated or manipulated content at scale. The WHCD deepfake, which is AI-enhanced rather than fully synthetic, sits in an interesting grey zone for that requirement. How regulators define "AI-generated" versus "AI-enhanced" in the enforcement context will matter considerably, and this week's incident makes that definitional question concrete for the first time.

Watching next week

- Storm-1516 EU enforcement. Following Bloomberg's investigation, watch for DSA proceedings against X to accelerate; the European Commission's investigation into X's handling of information manipulation is the relevant thread.

- Corinth verdict. The Childress trial is expected to continue through at least Thursday; the verdict will be an early test of how courts handle institutional accountability in AI-generated CSAM cases.

- American Leadership in AI Act movement. Watch for committee scheduling and early industry reaction to the deepfake victim remedies title specifically.

- EU AI Act Article 50 countdown. With enforcement roughly three months out, watch for platform guidance documents and early compliance announcements.

The Deepfake Watchlist publishes every Friday. Subscribe to receive it in your inbox, or follow Zohaib Ahmed on LinkedIn for the weekly social companion. Track every documented incident in the Resemble Deepfake Incident Database, and read the full methodology in our 2025 Deepfake Threat Report.