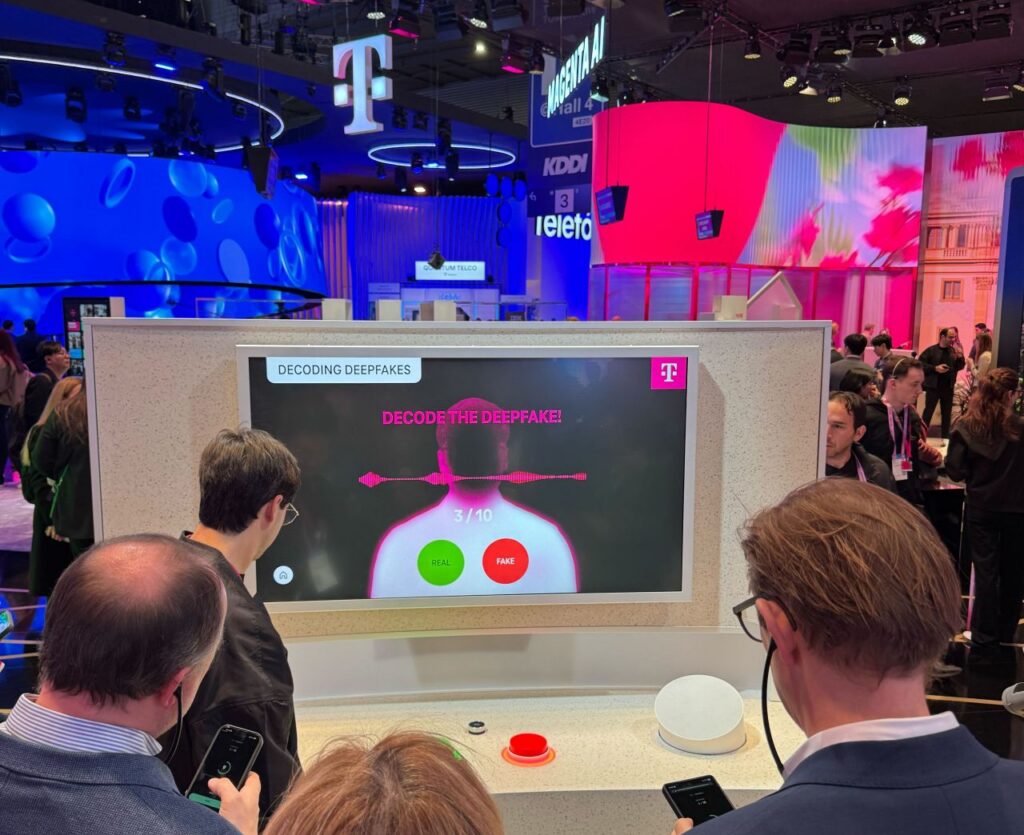

When Zohaib and Will came back from MWC Barcelona, the story they told stuck with me.

They ran a game at the booth. Played audio clips to people and asked: real voice or AI-generated? Engineers. Enterprise buyers. Security professionals. People who work in this space every day. Around 70% could not tell the difference.

I keep thinking about that.

I’ve been building in voice AI long enough to know that stat should not surprise me. But it did. Because those are exactly the people who are supposed to catch this.

The gap between real and synthetic has not just narrowed. For most practical purposes, it has closed. And if the people who are supposed to detect it can’t, the rest of us definitely can’t.

So the question we kept coming back to after Barcelona: what do you actually need to operate in a world where you cannot trust what you hear, see, or read anymore?

This is what we built in Q1.

You can't always tell what's real anymore.

I’m thinking about this more as a provenance problem than a detection problem. Not catching fakes after they spread. Proving what you made at the moment you made it.

For a while our watermarking API worked on audio only. That made sense when we first built it. It does not make sense anymore. AI-generated images and video are spreading at the same rate as audio. Conflict zones, boardrooms, political campaigns. We had not kept up.

So in Q1 the team extended it to cover images and video too. The system auto-detects file type, encodes an invisible watermark, and supports sync mode if you need the result right away. One API, three media types, no separate pipelines. Watermarking is how you prove what you made before someone makes something in your name.
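To make "one API, three media types" concrete, here is a minimal sketch of routing a file by media kind. It is not our implementation: the `media_kind` helper is hypothetical, and guessing from the file extension is an assumption made for brevity. The service detects the type on its own, so a client would not need this step.

```python
import mimetypes

# The three media kinds the watermarking API covers, per the text above.
SUPPORTED_KINDS = {"audio", "image", "video"}


def media_kind(filename: str) -> str:
    """Return 'audio', 'image', or 'video' for a filename (hypothetical helper).

    Assumption: the kind is guessed from the extension via the standard
    library. A real service would more likely inspect the file contents.
    """
    mime, _ = mimetypes.guess_type(filename)
    if mime is None:
        raise ValueError(f"cannot determine media type for {filename!r}")
    kind = mime.split("/", 1)[0]  # e.g. "video/mp4" -> "video"
    if kind not in SUPPORTED_KINDS:
        raise ValueError(f"unsupported media kind {kind!r} for {filename!r}")
    return kind


if __name__ == "__main__":
    for name in ("voice.wav", "photo.png", "clip.mp4"):
        print(name, "->", media_kind(name))
```

The point of the single entry point is that callers never branch on media type themselves; the sketch just shows what that branching looks like when it lives in one place.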

Five detection updates, shipped this quarter

Watermarking protects what you generate. But a lot of synthetic media already exists with no provenance at all, and more is being created right now. That’s where detection comes in.

The research and engineering teams shipped five updates to the detection stack this quarter. Two are top of mind.

Accuracy and provenance, together. The F1 score for image deepfake detection (DFD) improved from 0.961 to 0.970. But honestly the more interesting update is reverse image search, now live in both the UI and API. Pass use_reverse_search: true in your detection request and you get back provenance data, matches against known debunked hoaxes, and a spread indicator showing how widely the image has already circulated.
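As a rough sketch of what opting in looks like, the snippet below builds a JSON request body with the flag set. Only `use_reverse_search` comes from the description above. The `url` field name, the `build_detection_payload` helper, and the example address are assumptions for illustration, so check the API reference for the actual request shape and endpoint.

```python
import json


def build_detection_payload(media_url: str, reverse_search: bool = False) -> dict:
    """Build a detection request body (hypothetical helper).

    Assumption: the media is referenced by a "url" field. Only the
    "use_reverse_search" flag is taken from the post itself.
    """
    payload = {"url": media_url}
    if reverse_search:
        # Opt in to provenance data, hoax matches, and the spread indicator.
        payload["use_reverse_search"] = True
    return payload


# Serialize the body as it would be sent in an HTTP request.
body = json.dumps(
    build_detection_payload("https://example.com/suspect.jpg", reverse_search=True)
)

if __name__ == "__main__":
    print(body)
```

Leaving the flag out of the body when it is not requested keeps the default detection call unchanged for existing integrations.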

This catches things pure ML models miss. Images too new to be in any training set. Synthetic media that has been spreading for days before anyone flags it. If a fake has no digital footprint, the model has nothing to compare it to. Reverse image search gives you a second layer that does not depend on prior training.

Non-face content. We extended coverage to AI-generated content without faces. We prioritized this as soon as we saw the kind of synthetic conflict imagery circulating on social platforms, and the team turned it around in days. This was a case where being behind was not an option.

Three more worth knowing about:

- Identity now surfaces directly in the main Detect app. One request returns both a verdict and the closest matching speaker identity.

- Intelligence Reporting now runs three expert analysis layers in a single structured output: our own model, OpenAI, and Anthropic.

- Voice Activity Detection has been updated to filter non-speech content more cleanly, which means fewer false positives on audio with background noise or music mixed in.

We also shipped Zero Retention Mode. The ask from enterprise and government customers was consistent and non-negotiable: submitted media must not be viewable after analysis. Not by our staff, not by our systems, not by anyone. Files are purged automatically after processing. If that has been the thing blocking a procurement conversation, it is not the thing anymore.

On the generation side: custom vocabulary and NVIDIA ACE

While all of this was happening, the team also shipped on the generation side.

Custom vocabulary does not get the attention it deserves. It is not a flashy feature. It is the thing that makes TTS actually usable in healthcare, pharma, and legal. The industries where getting a word wrong is not an inconvenience. It is a compliance problem. I have been pushing for this one for a while.

The old fix was retraining. Slow and expensive. The new fix: pass a short audio sample at inference time and the model corrects the pronunciation on the fly. No retraining, no pipeline changes, correct on the first pass.

And on the quality side: NVIDIA named Chatterbox in their GDC 2026 RTX developer blog as part of NVIDIA ACE, their suite for expressive on-device NPC voice in games. NPC dialogue is historically one of the first things players skip. The bar for voice that actually holds attention is high. Being named in that context matters to us.

Start here

We built detection for enterprises. But I don’t think detection should only be accessible to enterprises.

Try the @resemble_detect bot on X. It’s free, and you don’t need an account or an API key. Tag it with any image or video and get a result back. It’s the quickest way to try our deepfake detection.

Or install the Resemble AI Deepfake Detector (beta). It is powered by the same detection technology used by enterprises and government agencies, now free to try. Install it, sign in to your Resemble AI account, and a Scan button appears automatically on every image, video, and audio element across Twitter/X, Reddit, Instagram, TikTok, LinkedIn, and more.

Each scan returns:

- A verdict: Authentic, AI Generated, or Uncertain

- A confidence score and rationale

- Heatmap visualization, frame-by-frame video breakdown, and per-segment audio scores
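If you want to act on these results in your own tooling, one way to model them is sketched below. The three verdict labels come from the list above. The `ScanResult` shape, the 0-to-1 confidence scale, and the 0.8 review threshold are assumptions for illustration, not the Detector's actual output format.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    # Labels taken from the scan output described above.
    AUTHENTIC = "Authentic"
    AI_GENERATED = "AI Generated"
    UNCERTAIN = "Uncertain"


@dataclass
class ScanResult:
    """Hypothetical container for one scan result."""
    verdict: Verdict
    confidence: float  # assumed to be on a 0.0 to 1.0 scale
    rationale: str


def needs_human_review(result: ScanResult, min_confidence: float = 0.8) -> bool:
    """Flag results that should not be acted on automatically.

    Assumption: anything Uncertain, or below the chosen confidence
    threshold, goes to a person instead of an automated action.
    """
    return result.verdict is Verdict.UNCERTAIN or result.confidence < min_confidence


if __name__ == "__main__":
    sample = ScanResult(Verdict.AI_GENERATED, 0.65, "inconsistent lighting")
    print(needs_human_review(sample))
```

Treating Uncertain as its own outcome, rather than forcing a binary call, is what lets a workflow like this route borderline cases to a reviewer.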

This beta release includes four free scans per day.

What's Next?

I’m genuinely proud of what this team shipped in one quarter. The research team turned around several model updates, one of them in direct response to synthetic conflict imagery spreading in real time. Engineers extended watermarking to three media types without breaking the existing API. The team behind the Deepfake Detector and the X bot made detection accessible to anyone with a browser.

None of this is abstract to me. I track it in Linear every day. We still have a lot of work to do.

If you want to understand the full scale of what we are building against, the Deepfake Threat Report 2025 is a great place to start.