Q3 2025 marks the inflection point where deepfakes evolved from isolated threats to industrialized warfare. Attackers used real-time video deepfakes during Zoom calls to authorize fraudulent wire transfers. The WhatsApp-to-Zoom kill chain is bypassing every traditional security protocol. State-sponsored operatives are using deepfakes to secure remote employment and plant malware.

This isn’t just volume growth; it’s ecosystem evolution. Our analysis provides the detection architecture and threat intelligence your organization needs before you become a case study.

Deploy within your own infrastructure, from bare-metal servers to air-gapped environments. Perfect for organizations that require complete control over sensitive content analysis while maintaining the highest security standards.

An ensemble of specialized AI models, each optimized for different media types and use cases. Defend against synthetic threats regardless of source, method, or modality.

Identify synthetic media in under 300 milliseconds. Our real-time detection integrates with live streams and communication platforms, allowing immediate response to potential security threats before they can cause harm.

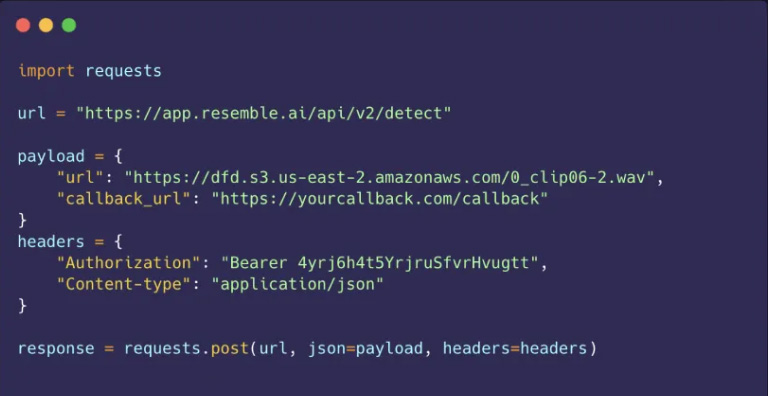

Deploy powerful deepfake protection in minutes, not months. Our straightforward API and flexible deployment options work with your existing security tools and workflows.

Talk with an expert to see how Detect by Resemble AI integrates with your systems to protect your organization against deepfake attacks.
