You trust your phone line. You trust your voicemail. But those channels are under attack, and corporate employees are caught in the crosshairs of a new wave of fraud.

According to global security research, a quarter of adults worldwide say they’ve experienced or know someone who experienced an AI voice-cloning scam, and 77% of those victims reported losing money.

What’s worse: attackers can clone a voice from just a few seconds of recorded audio and then pose as executives, colleagues, or vendors to manipulate employees into transferring funds or revealing sensitive data.

For businesses, the consequence is clear: your people are a gateway. A single phone call, sounding like the CEO, could trigger a wire transfer, leak confidential information, or bypass your best technical defenses.

Key Takeaways

- Voice cloning scams are on the rise, targeting employees through AI-generated voices that sound identical to real executives or colleagues.

- Attackers can clone a voice using just 3–10 seconds of recorded speech, often pulled from meetings, voicemails, or social media.

- Red flags include urgent requests, secrecy, unusual phrasing, and calls that bypass normal workflows, especially those involving payments or credentials.

- Prevention requires a multi-layered defense: employee awareness, verification protocols, and AI-based detection tools.

- Resemble AI’s Deepfake Detection, AI Watermarker, and Voice Enrollment solutions help organizations verify authentic voices, secure real-time communications, and train employees to spot scams before they cause damage.

What Are Voice Cloning Scams and Why They Target Employees

Voice cloning scams use AI-generated replicas of real voices to trick employees into taking harmful actions, like transferring money, sharing credentials, or revealing confidential data.

With just a few seconds of recorded speech, from a meeting, voicemail, or social post, scammers can clone a person’s voice so accurately that even colleagues can’t tell the difference.

Employees are ideal targets because they trust familiar voices, often face time pressure, and rarely have training to spot deepfake audio.

Traditional cybersecurity tools can’t detect synthetic voices because the attack happens on a human level, not a digital one. That’s why prevention now depends on a mix of training, authentication, and real-time detection.

Here’s a case study to help you understand how these scams occur in the real world and how employees can effectively tackle them.

Case Study: How a Deepfake CEO Targeted WPP Employees

A recent incident at WPP shows how quickly voice-cloning scams have evolved. According to The Guardian, attackers impersonated CEO Mark Read by cloning his voice, pairing it with a fake WhatsApp account and pre-recorded video clips to appear legitimate during a Microsoft Teams meeting. The impostor then attempted to pressure a senior employee into supporting a fraudulent “new business venture.”

The scam failed only because the employee sensed something was off, not because any technical system caught it.

Why this matters:

- The attacker used publicly available CEO voice recordings, showing how little audio is needed to craft a convincing clone.

- The scam blended audio and video deepfakes, making the impersonation harder to detect.

- Employees were targeted directly because they trust familiar voices, especially from leadership.

- Traditional cybersecurity tools offered no protection, since the attack happened through human channels, not network intrusion.

The WPP case highlights one truth: even well-trained teams can be manipulated when a scammer sounds exactly like the person they report to. It reinforces why organizations now need voice verification protocols, real-time deepfake detection, and watermarking tools to protect leadership identities and employee decision-making.

What to Look for in a Voice Cloning Scam (Red Flags for Employees)

AI-generated voices are becoming nearly indistinguishable from real ones, making scams harder to detect by sound alone. Spotting a cloned voice now depends on behavioral awareness and knowing what subtle inconsistencies to look for.

Below are key red flags every employee should recognize, along with how advanced deepfake detection tools can help identify them in real time.

1. Unusual Urgency or Pressure

If a voice message demands immediate action, especially around payments, credentials, or confidential data, it’s a warning sign. Attackers often exploit urgency to make employees bypass standard checks.

Tech Tip: Real-time deepfake detection can analyze tone, cadence, and audio signatures to identify synthetic markers like modulation inconsistencies or missing natural speech artifacts, flagging suspicious calls before harm is done.

2. Inconsistent Language or Phrasing

A cloned voice may sound convincing, but subtle differences in word choice or phrasing can give it away. Vague or oddly formal language often signals something’s off.

What to Watch For:

- The speaker uses general phrases like “I need this done now” instead of precise instructions.

- The tone feels too flat or emotionless for the situation.

3. Slightly Off Audio Quality

Even advanced clones sometimes have unnatural acoustic traits, such as missing breaths, looping background noise, or uneven pacing.

What to Watch For:

- Background noise abruptly disappears.

- Breathing patterns sound uniform.

- The speaker’s tone doesn’t match the context.

Deepfake detection systems can scan frequency and waveform data invisible to the human ear, identifying synthetic anomalies early.

4. Requests That Bypass Normal Workflow

Scammers often insist on secrecy with phrases like “keep this confidential” or “don’t involve anyone else.” Any message that avoids standard approval channels should be treated as high risk.

Training employees to recognize these social engineering cues through AI-simulated exercises builds real-world awareness and faster response times.

5. Unknown or Spoofed Caller IDs

Caller IDs can be easily faked. Attackers use VoIP or number-masking tools to appear legitimate. Always confirm suspicious requests through an independent channel.

Best Practice: Implement voice enrollment systems that verify authorized users through secure, real-time voiceprints, ensuring only approved speakers can execute sensitive actions. If something feels off, stop and verify. Even if the voice sounds familiar, double-check via email, internal chat, or a known callback number before acting.

Modern deepfake detection tools like DETECT-2B can quietly flag suspicious audio in the background, adding an extra layer of reassurance when employees aren’t sure what, or who, they’re hearing.
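The red flags above can be turned into a simple checklist that an employee or a helpdesk tool walks through after a suspicious call. The sketch below is illustrative only: the `CallObservation` fields, the `triage` function, and the thresholds are assumptions made for this example, not part of any product or standard.

```python
from dataclasses import dataclass


@dataclass
class CallObservation:
    """What the employee noticed during the call (all names are hypothetical)."""
    urgent: bool = False                  # red flag 1: unusual urgency or pressure
    odd_phrasing_or_audio: bool = False   # red flags 2 and 3: phrasing or audio feels off
    asks_for_secrecy: bool = False        # red flag 4: "keep this confidential"
    bypasses_workflow: bool = False       # red flag 4: skips normal approvals
    caller_id_unverified: bool = False    # red flag 5: unknown or spoofable caller ID
    involves_payment_or_credentials: bool = False


def triage(obs: CallObservation) -> str:
    """Map observed red flags to a recommended action (thresholds are assumed)."""
    flags = sum([
        obs.urgent,
        obs.odd_phrasing_or_audio,
        obs.asks_for_secrecy,
        obs.bypasses_workflow,
        obs.caller_id_unverified,
    ])
    # Any red flag on a payment or credential request is treated as high risk.
    if obs.involves_payment_or_credentials and flags >= 1:
        return "stop_and_verify"
    if flags >= 2:
        return "stop_and_verify"
    if flags == 1:
        return "verify_before_acting"
    return "proceed"


print(triage(CallObservation(urgent=True, involves_payment_or_credentials=True)))
```

A checklist like this does not detect synthetic audio. It only makes the "if something feels off, stop and verify" habit explicit and repeatable.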

How to Defend Your Organization Against Voice Cloning Scams

Preventing voice-based scams requires a mix of people, processes, and technology. Here’s how to build a proactive defense strategy.

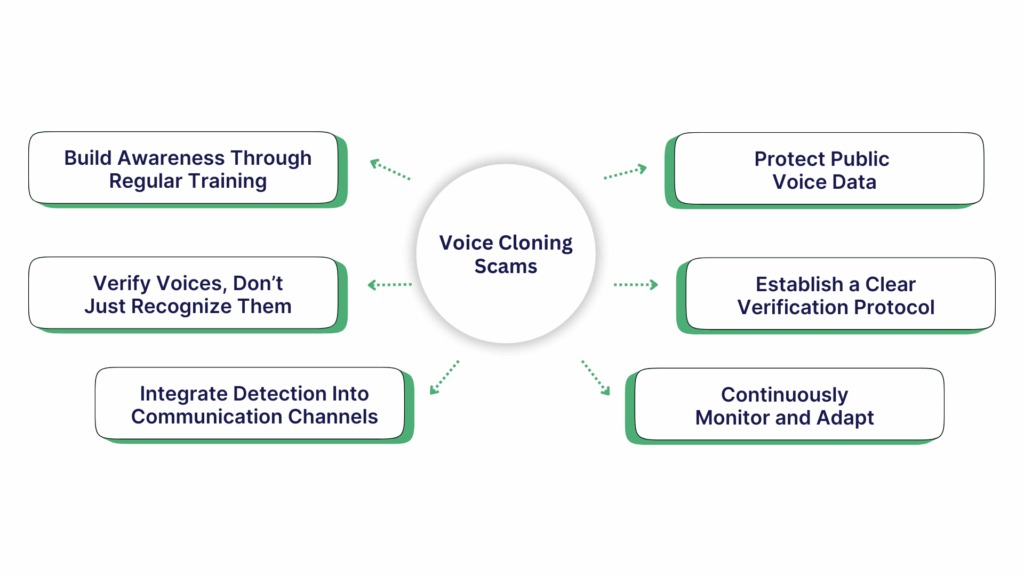

1. Build Awareness Through Regular Training

Employees are the strongest defense if they know what to look for. Many scams succeed simply because someone trusted the wrong voice.

Use simulation-based security training to safely expose staff to deepfake scenarios, improving reaction time and decision-making under pressure.

2. Verify Voices, Don’t Just Recognize Them

Familiarity isn’t security. Combine voice recognition with authentication tools that validate speaker identity through enrolled voiceprints. This protects calls, meetings, and helpdesk interactions alike.

3. Integrate Detection Into Communication Channels

Scams now happen across Zoom, Teams, and phone systems. Embedding deepfake detection into these platforms ensures real-time monitoring and automatic flagging of cloned audio.

4. Protect Public Voice Data

Executives’ voices often appear in podcasts, event recordings, and investor calls, all of which can be harvested for cloning. Apply AI watermarking to official voice content to help prove authenticity and make tampering easier to detect.

5. Establish a Clear Verification Protocol

Even with strong tools, employees must know how to respond. Require multi-channel verification for sensitive requests, set internal validation codes, and encourage a “pause and verify” culture.
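One way to picture an "internal validation code" is a challenge-response check: the employee reads out a random challenge, and the caller must answer with a short code derived from a secret that was shared in advance over a trusted channel. The following is a minimal sketch under that assumption. The function names, the 8-character code length, and the idea of a pre-shared team secret are all hypothetical choices for illustration, not a description of any specific product.

```python
import hashlib
import hmac
import secrets


def new_challenge() -> str:
    """Generate a random one-time challenge the employee reads to the caller."""
    return secrets.token_hex(8)  # 16 hex characters


def expected_response(shared_secret: bytes, challenge: str) -> str:
    """Derive the short code a legitimate caller should be able to produce."""
    digest = hmac.new(shared_secret, challenge.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:8]  # truncated for readability (assumed length)


def verify_response(shared_secret: bytes, challenge: str, response: str) -> bool:
    """Check the caller's answer using a constant-time comparison."""
    expected = expected_response(shared_secret, challenge).encode("utf-8")
    given = response.strip().lower().encode("utf-8")
    return hmac.compare_digest(expected, given)


# Hypothetical usage: the secret would be provisioned out of band, never by phone.
team_secret = b"example-secret-provisioned-out-of-band"
challenge = new_challenge()
good_answer = expected_response(team_secret, challenge)
print(verify_response(team_secret, challenge, good_answer))
print(verify_response(team_secret, challenge, "not-a-code"))
```

Because the code depends on both the secret and a fresh challenge, a cloned voice alone is not enough to pass the check, and a code overheard on one call cannot be replayed on the next.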

6. Continuously Monitor and Adapt

Voice cloning threats evolve quickly. Conduct periodic audits using deepfake detection and audio intelligence reports to identify vulnerabilities and improve defenses.

Despite these techniques, there are a few common mistakes that organizations need to be aware of.

Common Mistakes Organizations Make, and How to Avoid Them

Despite strong security programs, many companies still leave critical gaps that make voice-cloning scams easier to pull off. These mistakes often go unnoticed until an incident occurs, as they are not typically identified in traditional cybersecurity reviews.

Here are the mistakes that matter most and how modern security teams can correct them.

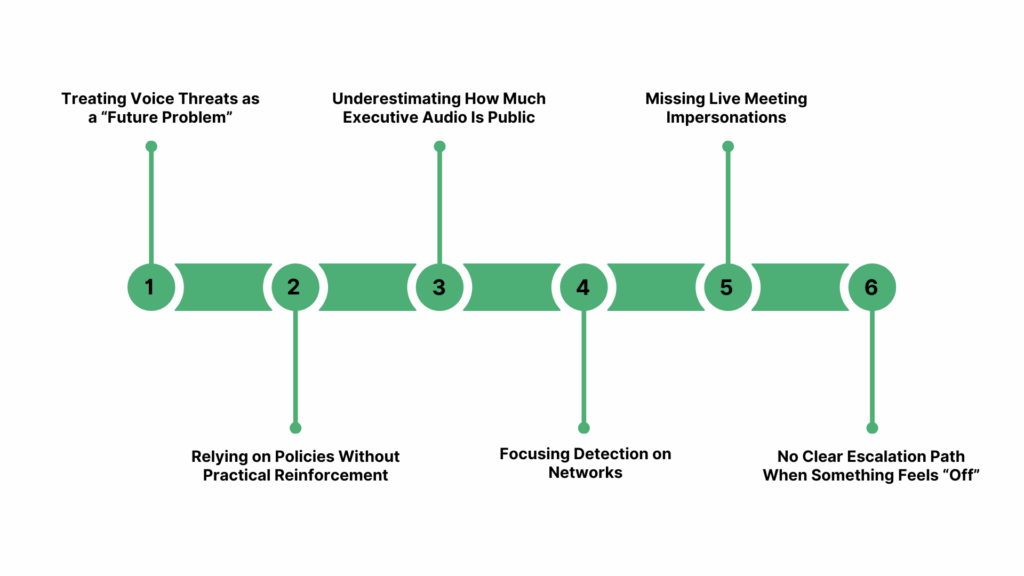

1. Treating Voice Threats as a “Future Problem”

Many leaders still assume deepfake voices are rare, experimental, or only relevant to high-profile public figures. In reality, scammers now target mid-level managers, finance teams, HR, and IT helpdesks because these employees approve transactions and handle sensitive access.

Fix it:

Shift voice fraud from an “awareness topic” to a core risk category. Add voice-based attacks to tabletop exercises, incident response plans, and executive briefings so teams learn to treat voice anomalies as seriously as phishing alerts.

2. Relying on Policies Without Practical Reinforcement

Organizations often publish verification policies but never train employees to practice them under stress. In real scams, employees panic, feel rushed, and default to trust.

Fix it:

Use scenario-based drills and internal simulations so employees can rehearse “pause and verify” habits in realistic situations. Practice reduces hesitation and builds confidence to challenge suspicious voice requests.

3. Underestimating How Much Executive Audio Is Public

Most companies don’t track where their executives’ voices appear online: keynote recordings, webinars, investor calls, podcasts, and media interviews. Together, these form a massive pool of material for cloning.

Fix it:

Audit all publicly available audio of key leaders and apply AI watermarking to new official content. This makes synthetic versions easier to detect and prevents clean audio from being weaponized.

4. Focusing Detection on Networks, Not Human Channels

Traditional cybersecurity tools monitor email, endpoints, and networks, but voice-cloning scams bypass those entirely. When a scam arrives as a convincing voice call or live meeting, there is no digital signature for legacy tools to catch.

Fix it:

Adopt voice-specific security controls, such as real-time audio analysis and verification signals during sensitive conversations. These tools actively support employees during high-pressure calls.

5. Missing Live Meeting Impersonations

Most security teams protect email and messaging but overlook Zoom, Teams, Webex, and Meet as attack surfaces. Real-time impersonations, like the WPP case, are growing fast, and unmonitored meetings create blind spots.

Fix it:

Enable real-time deepfake detection within collaboration platforms so suspicious speech is flagged immediately, before an employee shares confidential details or approves a request.

6. No Clear Escalation Path When Something Feels “Off”

Employees often know something doesn’t sound right, but they don’t know who to notify or how to escalate without disrupting work. This hesitation is exactly what scammers exploit.

Fix it:

Create a simple, well-known escalation rule.

For example: “If you receive a voice request involving money, credentials, or urgency, verify through a second channel and notify Security Operations immediately.”

This helps employees act quickly without fearing they’re overreacting.
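An escalation rule like the one quoted above is simple enough to encode directly, for instance in a helpdesk form or a chatbot that tells employees what to do next. The sketch below is only an illustration: the topic labels, the step names, and the `escalation_steps` function are assumptions made for this example.

```python
from typing import List, Set

# Topics that trigger the rule, taken from the example wording above.
SENSITIVE_TOPICS = frozenset({"money", "credentials", "urgency"})


def escalation_steps(channel: str, topics: Set[str]) -> List[str]:
    """Return the steps an employee should take for a given request.

    `channel` and the returned step names are hypothetical labels.
    """
    if channel == "voice" and topics & SENSITIVE_TOPICS:
        return ["verify_via_second_channel", "notify_security_operations"]
    return []


print(escalation_steps("voice", {"money"}))
print(escalation_steps("voice", {"scheduling"}))
```

Keeping the rule this small is deliberate: an employee under pressure should not have to interpret a decision tree before pausing a call.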

What Comes Next: The Future of AI-Powered Voice Scams

Voice cloning technology is evolving faster than most corporate security infrastructures, but the same innovation powering these scams is also driving stronger defenses. Here’s what’s next for this emerging threat landscape and how organizations can stay ahead.

1. Real-Time Deepfakes Will Become More Common

AI models can now generate and stream synthetic voices instantly, enabling fake “live” conversations. Businesses will need real-time detection tools to identify and stop these instant attacks.

2. Multilingual Deepfakes Will Target Global Teams

Next-generation voice cloning systems can replicate voices across multiple languages. A cloned English-speaking executive could soon “speak” Mandarin or Spanish fluently.

Resemble AI’s Audio Intelligence and Deepfake Detection engines analyze tone and linguistic patterns across languages, identifying cross-lingual deepfakes before they spread.

3. Deepfake Detection Will Be Built Into Collaboration Tools

Security will shift from optional add-ons to embedded capabilities. Platforms like Teams and Zoom will increasingly rely on API-based integrations, similar to Resemble AI’s Realtime Multimodal Detector, to secure voice communications natively.

4. Regulation and Compliance Standards Are Coming

Governments are expected to enforce traceable watermarking and identity verification for synthetic media. Organizations adopting ethical watermarking tools today, such as Resemble AI’s AI Watermarker, will be well-positioned for compliance as regulations tighten.

5. Voice Authentication Will Replace Passwords

As deepfake detection matures, verified voiceprints will become a standard form of secure identity verification. Resemble AI’s Voice Enrollment for Identity Protection provides that foundation, safeguarding both employees and customers.

Conclusion

AI-driven voice scams are no longer rare; they are a daily business risk. The solution isn’t to fear the technology, but to use stronger, more transparent AI to counter malicious uses.

By combining employee awareness, verified voice authentication, and real-time deepfake detection, organizations can stop impersonation attempts before damage occurs.

Resemble AI provides a scalable, enterprise-ready defense through:

- Deepfake Detection and Realtime Multimodal Detection for meetings and calls.

- AI Watermarker for protecting authentic voice data.

- Voice Enrollment for Identity Protection to confirm speaker authenticity.

- Gen AI–based Security Awareness Training to keep employees alert and resilient.

In the age of cloned voices, trust isn’t about who you hear; it’s about what your systems can verify.

Strengthen your organization’s voice security. Book a free demo with Resemble AI.

Frequently Asked Questions

1. How can I tell if a voice message or call is cloned?

AI-generated voices sound almost human, so detection relies on context, not just tone. Be cautious of calls that create urgency, skip protocol, or sound slightly “off.”

2. Can AI really clone someone’s voice from just a short clip?

Yes. With modern voice synthesis tools, scammers need only a few seconds of recorded speech to mimic someone’s voice with high accuracy. That’s why it’s critical to protect your voice data and apply AI Watermarking to official audio.

3. What should employees do if they suspect a cloned-voice call?

Pause the conversation immediately and verify through another channel: email, internal chat, or a direct callback to a verified number.

Report the incident to IT or your security team for analysis. Organizations using Realtime Multimodal Detection can also log and trace suspicious audio automatically.

4. How can companies protect their employees from these scams?

Start with awareness training, then add verification protocols and AI detection systems. Resemble AI’s Gen AI–based Security Awareness Training helps teams recognize deepfake tactics safely, while Voice Enrollment for Identity Protection confirms who’s really speaking during sensitive actions.

5. Can deepfake detection work on live meetings?

Yes. Tools like Resemble AI’s Realtime Multimodal Deepfake Detector analyze live audio on Zoom, Teams, Webex, and Meet, detecting synthetic voices before they’re acted upon.

6. What’s the long-term solution to voice cloning scams?

The long-term solution is to build a trusted voice ecosystem in which every legitimate voice is authenticated, watermarked, and verifiable.

With AI Watermarker and Voice Enrollment, organizations can ensure that every voice interaction inside the company can be traced and trusted.